Hypothesis Testing Calculator


The first step in hypothesis testing is to calculate the test statistic. The formula for the test statistic depends on whether the population standard deviation (σ) is known or unknown. If σ is known, our hypothesis test is known as a z test and we use the z distribution. If σ is unknown, our hypothesis test is known as a t test and we use the t distribution. Use of the t distribution relies on the degrees of freedom, which is equal to the sample size minus one. Furthermore, if the population standard deviation σ is unknown, the sample standard deviation s is used instead. To switch from σ known to σ unknown, click on $\boxed{\sigma}$ and select $\boxed{s}$ in the Hypothesis Testing Calculator.

Next, the test statistic is used to conduct the test using either the p-value approach or critical value approach. The particular steps taken in each approach largely depend on the form of the hypothesis test: lower tail, upper tail or two-tailed. The form can easily be identified by looking at the alternative hypothesis ($H_a$). If the alternative hypothesis contains a less-than sign, it is a lower tail test; a greater-than sign indicates an upper tail test; and a not-equal sign indicates a two-tailed test. To switch from a lower tail test to an upper tail or two-tailed test, click on $\boxed{\geq}$ and select $\boxed{\leq}$ or $\boxed{=}$, respectively.

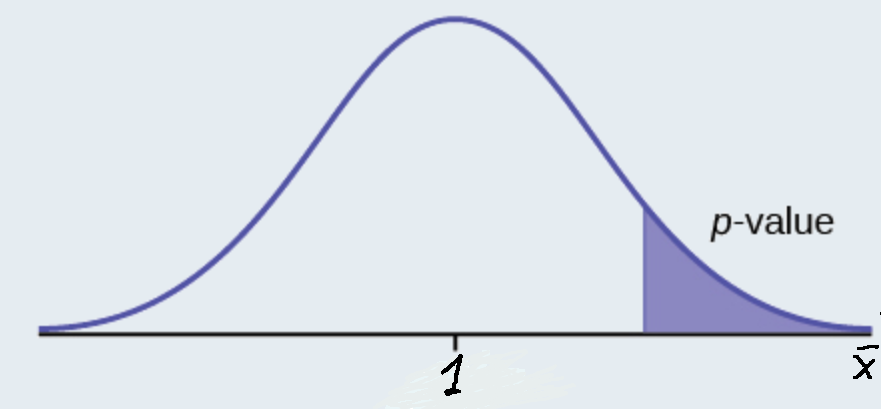

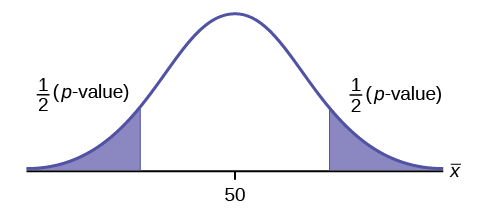

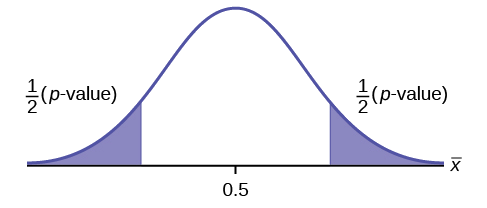

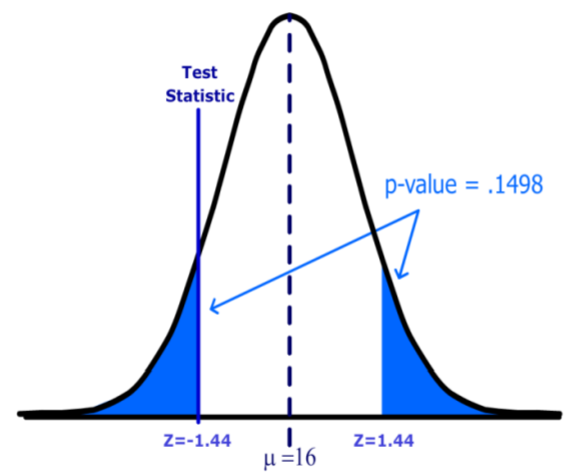

In the p-value approach, the test statistic is used to calculate a p-value. If the test is a lower tail test, the p-value is the probability of getting a value for the test statistic at least as small as the value from the sample. If the test is an upper tail test, the p-value is the probability of getting a value for the test statistic at least as large as the value from the sample. In a two-tailed test, the p-value is the probability of getting a value for the test statistic at least as unlikely as the value from the sample.

To test the hypothesis in the p-value approach, compare the p-value to the level of significance. If the p-value is less than or equal to the level of significance, reject the null hypothesis. If the p-value is greater than the level of significance, do not reject the null hypothesis. This method remains unchanged regardless of whether it's a lower tail, upper tail or two-tailed test. To change the level of significance, click on $\boxed{.05}$. Note that if the test statistic is given, you can calculate the p-value from the test statistic by clicking on the switch symbol twice.
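The p-value rule can be sketched for a z test using only Python's standard library. The function names `z_p_value` and `reject_null`, and the tail labels, are assumptions for this illustration.

```python
from statistics import NormalDist

def z_p_value(z, tail):
    """p-value for a z statistic; tail is 'lower', 'upper', or 'two'."""
    cdf = NormalDist().cdf  # standard normal cumulative distribution
    if tail == "lower":
        return cdf(z)                 # at least as small as z
    if tail == "upper":
        return 1 - cdf(z)             # at least as large as z
    if tail == "two":
        return 2 * cdf(-abs(z))       # at least as extreme in either direction
    raise ValueError("tail must be 'lower', 'upper', or 'two'")

def reject_null(p_value, alpha=0.05):
    """Same decision rule for every form of the test."""
    return p_value <= alpha

p_two = z_p_value(-2.0, "two")        # illustrative z value
decision = reject_null(p_two, alpha=0.05)
```

With the illustrative statistic z = −2.0, the two-tailed p-value is a little under 0.05, so the null hypothesis would be rejected at the 0.05 level but not at the 0.01 level.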

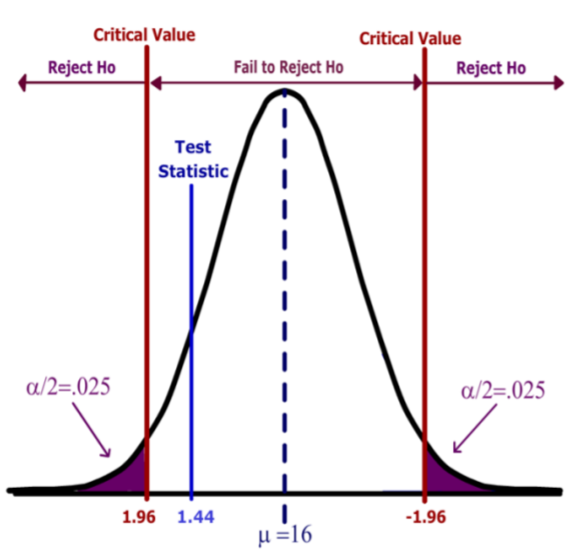

In the critical value approach, the level of significance ($\alpha$) is used to calculate the critical value. In a lower tail test, the critical value is the value of the test statistic providing an area of $\alpha$ in the lower tail of the sampling distribution of the test statistic. In an upper tail test, the critical value is the value of the test statistic providing an area of $\alpha$ in the upper tail of the sampling distribution of the test statistic. In a two-tailed test, the critical values are the values of the test statistic providing areas of $\alpha / 2$ in the lower and upper tail of the sampling distribution of the test statistic.

To test the hypothesis in the critical value approach, compare the critical value to the test statistic. Unlike the p-value approach, the method we use to decide whether to reject the null hypothesis depends on the form of the hypothesis test. In a lower tail test, if the test statistic is less than or equal to the critical value, reject the null hypothesis. In an upper tail test, if the test statistic is greater than or equal to the critical value, reject the null hypothesis. In a two-tailed test, if the test statistic is less than or equal to the lower critical value or greater than or equal to the upper critical value, reject the null hypothesis.
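A minimal sketch of the critical value approach for a z test follows. It relies on `NormalDist.inv_cdf` from the standard library; the helper names and tail labels are assumptions chosen for the example.

```python
from statistics import NormalDist

def z_critical_values(alpha, tail):
    """Critical value(s) of the standard normal for the given tail form."""
    inv = NormalDist().inv_cdf
    if tail == "lower":
        return (inv(alpha),)                      # area alpha in the lower tail
    if tail == "upper":
        return (inv(1 - alpha),)                  # area alpha in the upper tail
    if tail == "two":
        return (inv(alpha / 2), inv(1 - alpha / 2))  # alpha/2 in each tail
    raise ValueError("tail must be 'lower', 'upper', or 'two'")

def reject_by_critical_value(z, alpha, tail):
    """Decision rule that depends on the form of the test."""
    crit = z_critical_values(alpha, tail)
    if tail == "lower":
        return z <= crit[0]
    if tail == "upper":
        return z >= crit[0]
    return z <= crit[0] or z >= crit[1]

lower_crit, upper_crit = z_critical_values(0.05, "two")
```

For α = 0.05 the two-tailed critical values are approximately ±1.96, so an illustrative statistic of −2.0 falls in the rejection region.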

When conducting a hypothesis test, there is always a chance that you come to the wrong conclusion. There are two types of errors you can make: Type I Error and Type II Error. A Type I Error is committed if you reject the null hypothesis when the null hypothesis is true. Ideally, we'd like to accept the null hypothesis when the null hypothesis is true. A Type II Error is committed if you accept the null hypothesis when the alternative hypothesis is true. Ideally, we'd like to reject the null hypothesis when the alternative hypothesis is true.
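One way to build intuition for a Type I Error is a small simulation: draw many samples from a population where the null hypothesis is true and count how often a two-tailed z test rejects it anyway. This is only a sketch; the population values, trial count, and seed are arbitrary assumptions.

```python
import math
import random
from statistics import NormalDist, fmean

def type_i_error_rate(mu0=100.0, sigma=15.0, n=25, alpha=0.05,
                      trials=20000, seed=1):
    """Estimate how often a true null hypothesis is rejected."""
    rng = random.Random(seed)
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)
    standard_error = sigma / math.sqrt(n)
    rejections = 0
    for _ in range(trials):
        # The sample really does come from a population with mean mu0.
        sample_mean = fmean(rng.gauss(mu0, sigma) for _ in range(n))
        z = (sample_mean - mu0) / standard_error
        if abs(z) >= z_crit:
            rejections += 1  # a Type I Error: rejecting a true null
    return rejections / trials

estimated_rate = type_i_error_rate()
```

The estimated rate should land close to the chosen level of significance, which is the long-run proportion of Type I Errors the test is designed to allow.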

Hypothesis testing is closely related to the statistical area of confidence intervals. If the hypothesized value of the population mean is outside of the confidence interval, we can reject the null hypothesis. Confidence intervals can be found using the Confidence Interval Calculator. The calculator on this page does hypothesis tests for one population mean. Sometimes we're interested in hypothesis tests about two population means. These can be solved using the Two Population Calculator. The probability of a Type II Error can be calculated by clicking on the link at the bottom of the page.
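The link between confidence intervals and tests can be checked numerically. The sketch below assumes a two-tailed z test with known σ and a confidence level of 1 − α; the function names and numbers are illustrative assumptions.

```python
import math
from statistics import NormalDist

def z_confidence_interval(sample_mean, sigma, n, confidence=0.95):
    """Two-sided confidence interval for the mean with known sigma."""
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    margin = z * sigma / math.sqrt(n)
    return sample_mean - margin, sample_mean + margin

def rejects_two_tailed(sample_mean, mu0, sigma, n, alpha=0.05):
    """Two-tailed z test decision using the p-value approach."""
    z = (sample_mean - mu0) / (sigma / math.sqrt(n))
    return 2 * NormalDist().cdf(-abs(z)) <= alpha

low, high = z_confidence_interval(52.0, sigma=6.0, n=36)
mu0 = 50.0
outside_interval = mu0 < low or mu0 > high
rejected = rejects_two_tailed(52.0, mu0, sigma=6.0, n=36)
```

In this example the hypothesized mean of 50 lies just below the 95% interval, and the two-tailed test at α = 0.05 rejects the null hypothesis, so the two procedures agree.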


Hypothesis Test for a Mean

This lesson explains how to conduct a hypothesis test of a mean, when the following conditions are met:

- The sampling method is simple random sampling .

- The sampling distribution is normal or nearly normal.

Generally, the sampling distribution will be approximately normally distributed if any of the following conditions apply.

- The population distribution is normal.

- The population distribution is symmetric , unimodal , without outliers , and the sample size is 15 or less.

- The population distribution is moderately skewed , unimodal, without outliers, and the sample size is between 16 and 40.

- The sample size is greater than 40, without outliers.

This approach consists of four steps: (1) state the hypotheses, (2) formulate an analysis plan, (3) analyze sample data, and (4) interpret results.

State the Hypotheses

Every hypothesis test requires the analyst to state a null hypothesis and an alternative hypothesis . The hypotheses are stated in such a way that they are mutually exclusive. That is, if one is true, the other must be false; and vice versa.

The table below shows three sets of hypotheses. Each makes a statement about how the population mean μ is related to a specified value M . (In the table, the symbol ≠ means " not equal to ".)

The first set of hypotheses (Set 1) is an example of a two-tailed test , since an extreme value on either side of the sampling distribution would cause a researcher to reject the null hypothesis. The other two sets of hypotheses (Sets 2 and 3) are one-tailed tests , since an extreme value on only one side of the sampling distribution would cause a researcher to reject the null hypothesis.

Formulate an Analysis Plan

The analysis plan describes how to use sample data to accept or reject the null hypothesis. It should specify the following elements.

- Significance level. Often, researchers choose significance levels equal to 0.01, 0.05, or 0.10; but any value between 0 and 1 can be used.

- Test method. Use the one-sample t-test to determine whether the hypothesized mean differs significantly from the observed sample mean.

Analyze Sample Data

Using sample data, conduct a one-sample t-test. This involves finding the standard error, degrees of freedom, test statistic, and the P-value associated with the test statistic.

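- Standard error. Compute the standard error (SE) of the sampling distribution.

SE = s * sqrt{ ( 1/n ) * [ ( N - n ) / ( N - 1 ) ] }

where s is the standard deviation of the sample, N is the population size, and n is the sample size. When the population size is much larger than the sample size (at least 20 times larger), the standard error can be approximated by:

SE = s / sqrt( n )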

- Degrees of freedom. The degrees of freedom (DF) is equal to the sample size (n) minus one. Thus, DF = n - 1.

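- Test statistic. The test statistic is a t statistic (t) defined by the following equation.

t = ( x - μ) / SE

where x is the sample mean, μ is the hypothesized population mean in the null hypothesis, and SE is the standard error.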

- P-value. The P-value is the probability of observing a sample statistic as extreme as the test statistic. Since the test statistic is a t statistic, use the t Distribution Calculator to assess the probability associated with the t statistic, given the degrees of freedom computed above. (See sample problems at the end of this lesson for examples of how this is done.)
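The standard error, degrees of freedom, and t statistic described above can be sketched as follows. The function names, the optional `population_size` argument, and the sample numbers are assumptions for illustration; the finite-population factor is applied only when a population size is supplied.

```python
import math

def standard_error(s, n, population_size=None):
    """SE of the mean; applies the finite-population factor when N is given."""
    if population_size is None:
        return s / math.sqrt(n)
    correction = (population_size - n) / (population_size - 1)
    return s * math.sqrt((1 / n) * correction)

def one_sample_t(sample_mean, mu, s, n, population_size=None):
    """Return (t statistic, degrees of freedom) for a one-sample t-test."""
    se = standard_error(s, n, population_size)
    return (sample_mean - mu) / se, n - 1

# Illustrative values: mean 98 from 16 observations, s = 8, hypothesized mean 100.
t_stat, df = one_sample_t(sample_mean=98.0, mu=100.0, s=8.0, n=16)
se_small_population = standard_error(8.0, 16, population_size=200)
```

With a population of only 200, the corrected standard error is slightly smaller than the simple s / sqrt(n) value of 2, which is why the approximation is reserved for populations much larger than the sample.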


Interpret Results

If the sample findings are unlikely, given the null hypothesis, the researcher rejects the null hypothesis. Typically, this involves comparing the P-value to the significance level , and rejecting the null hypothesis when the P-value is less than the significance level.

Test Your Understanding

In this section, two sample problems illustrate how to conduct a hypothesis test of a mean score. The first problem involves a two-tailed test; the second problem, a one-tailed test.

Problem 1: Two-Tailed Test

An inventor has developed a new, energy-efficient lawn mower engine. He claims that the engine will run continuously for 5 hours (300 minutes) on a single gallon of regular gasoline. From his stock of 2000 engines, the inventor selects a simple random sample of 50 engines for testing. The engines run for an average of 295 minutes, with a standard deviation of 20 minutes. Test the null hypothesis that the mean run time is 300 minutes against the alternative hypothesis that the mean run time is not 300 minutes. Use a 0.05 level of significance. (Assume that run times for the population of engines are normally distributed.)

Solution: The solution to this problem takes four steps: (1) state the hypotheses, (2) formulate an analysis plan, (3) analyze sample data, and (4) interpret results. We work through those steps below:

Null hypothesis: μ = 300

Alternative hypothesis: μ ≠ 300

- Formulate an analysis plan . For this analysis, the significance level is 0.05. The test method is a one-sample t-test .

SE = s / sqrt(n) = 20 / sqrt(50) = 20/7.07 = 2.83

DF = n - 1 = 50 - 1 = 49

t = ( x - μ) / SE = (295 - 300)/2.83 = -1.77

where s is the standard deviation of the sample, x is the sample mean, μ is the hypothesized population mean, and n is the sample size.

Since we have a two-tailed test , the P-value is the probability that the t statistic having 49 degrees of freedom is less than -1.77 or greater than 1.77. We use the t Distribution Calculator to find P(t < -1.77) is about 0.04.

- If you enter 1.77 as the t statistic in the t Distribution Calculator, you will find that P(t < 1.77) is about 0.96. Therefore, P(t > 1.77) is 1 minus 0.96, or 0.04. Thus, the P-value = 0.04 + 0.04 = 0.08.

- Interpret results . Since the P-value (0.08) is greater than the significance level (0.05), we cannot reject the null hypothesis.

Note: If you use this approach on an exam, you may also want to mention why this approach is appropriate. Specifically, the approach is appropriate because the sampling method was simple random sampling, the population was normally distributed, and the sample size was small relative to the population size (less than 5%).

Problem 2: One-Tailed Test

Bon Air Elementary School has 1000 students. The principal of the school thinks that the average IQ of students at Bon Air is at least 110. To prove her point, she administers an IQ test to 20 randomly selected students. Among the sampled students, the average IQ is 108 with a standard deviation of 10. Based on these results, should the principal accept or reject her original hypothesis? Assume a significance level of 0.01. (Assume that test scores in the population of students are normally distributed.)

Null hypothesis: μ >= 110

Alternative hypothesis: μ < 110

- Formulate an analysis plan . For this analysis, the significance level is 0.01. The test method is a one-sample t-test .

SE = s / sqrt(n) = 10 / sqrt(20) = 10/4.472 = 2.236

DF = n - 1 = 20 - 1 = 19

t = ( x - μ) / SE = (108 - 110)/2.236 = -0.894

Here is the logic of the analysis: Given the alternative hypothesis (μ < 110), we want to know whether the observed sample mean is small enough to cause us to reject the null hypothesis.

The observed sample mean produced a t statistic of -0.894. We use the t Distribution Calculator to find P(t < -0.894) is about 0.19.

- This means we would expect to find a sample mean of 108 or smaller in 19 percent of our samples, if the true population IQ were 110. Thus the P-value in this analysis is 0.19.

- Interpret results . Since the P-value (0.19) is greater than the significance level (0.01), we cannot reject the null hypothesis.

t-test Calculator


Welcome to our t-test calculator! Here you can not only easily perform one-sample t-tests , but also two-sample t-tests , as well as paired t-tests .

Do you prefer to find the p-value from t-test, or would you rather find the t-test critical values? Well, this t-test calculator can do both! 😊

What does a t-test tell you? Take a look at the text below, where we explain what actually gets tested when various types of t-tests are performed. Also, we explain when to use t-tests (in particular, whether to use the z-test vs. t-test) and what assumptions your data should satisfy for the results of a t-test to be valid. If you've ever wanted to know how to do a t-test by hand, we provide the necessary t-test formula, as well as tell you how to determine the number of degrees of freedom in a t-test.

A t-test is one of the most popular statistical tests for location , i.e., it deals with the population(s) mean value(s).

There are different types of t-tests that you can perform:

- A one-sample t-test;

- A two-sample t-test; and

- A paired t-test.

In the next section , we explain when to use which. Remember that a t-test can only be used for one or two groups . If you need to compare three (or more) means, use the analysis of variance ( ANOVA ) method.

The t-test is a parametric test, meaning that your data has to fulfill some assumptions :

- The data points are independent; AND

- The data, at least approximately, follow a normal distribution .

If your sample doesn't fit these assumptions, you can resort to nonparametric alternatives. Visit our Mann–Whitney U test calculator or the Wilcoxon rank-sum test calculator to learn more. Other possibilities include the Wilcoxon signed-rank test or the sign test.

Your choice of t-test depends on whether you are studying one group or two groups:

One sample t-test

Choose the one-sample t-test to check if the mean of a population is equal to some pre-set hypothesized value .

The average volume of a drink sold in 0.33 l cans — is it really equal to 330 ml?

The average weight of people from a specific city — is it different from the national average?

Choose the two-sample t-test to check if the difference between the means of two populations is equal to some pre-determined value when the two samples have been chosen independently of each other.

In particular, you can use this test to check whether the two groups are different from one another .

The average difference in weight gain in two groups of people: one group was on a high-carb diet and the other on a high-fat diet.

The average difference in the results of a math test from students at two different universities.

This test is sometimes referred to as an independent samples t-test , or an unpaired samples t-test .

A paired t-test is used to investigate the change in the mean of a population before and after some experimental intervention , based on a paired sample, i.e., when each subject has been measured twice: before and after treatment.

In particular, you can use this test to check whether, on average, the treatment has had any effect on the population .

The change in student test performance before and after taking a course.

The change in blood pressure in patients before and after administering some drug.

So, you've decided which t-test to perform. These next steps will tell you how to calculate the p-value from t-test or its critical values, and then which decision to make about the null hypothesis.

Decide on the alternative hypothesis :

Use a two-tailed t-test if you only care whether the population's mean (or, in the case of two populations, the difference between the populations' means) agrees or disagrees with the pre-set value.

Use a one-tailed t-test if you want to test whether this mean (or difference in means) is greater/less than the pre-set value.

Compute your T-score value :

Formulas for the test statistic in t-tests include the sample size , as well as its mean and standard deviation . The exact formula depends on the t-test type — check the sections dedicated to each particular test for more details.

Determine the degrees of freedom for the t-test:

The degrees of freedom are the number of observations in a sample that are free to vary as we estimate statistical parameters. In the simplest case, the number of degrees of freedom equals your sample size minus the number of parameters you need to estimate . Again, the exact formula depends on the t-test you want to perform — check the sections below for details.

The degrees of freedom are essential, as they determine the distribution followed by your T-score (under the null hypothesis). If there are d degrees of freedom, then the distribution of the test statistics is the t-Student distribution with d degrees of freedom . This distribution has a shape similar to N(0,1) (bell-shaped and symmetric) but has heavier tails . If the number of degrees of freedom is large (>30), which generically happens for large samples, the t-Student distribution is practically indistinguishable from N(0,1).

💡 The t-Student distribution owes its name to William Sealy Gosset, who, in 1908, published his paper on the t-test under the pseudonym "Student". Gosset worked at the famous Guinness Brewery in Dublin, Ireland, and devised the t-test as an economical way to monitor the quality of beer. Cheers! 🍺🍺🍺

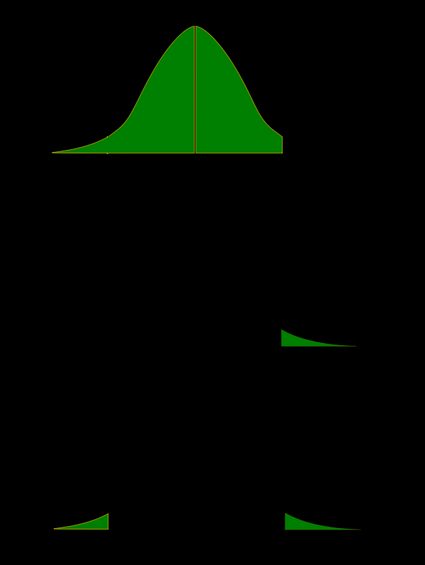

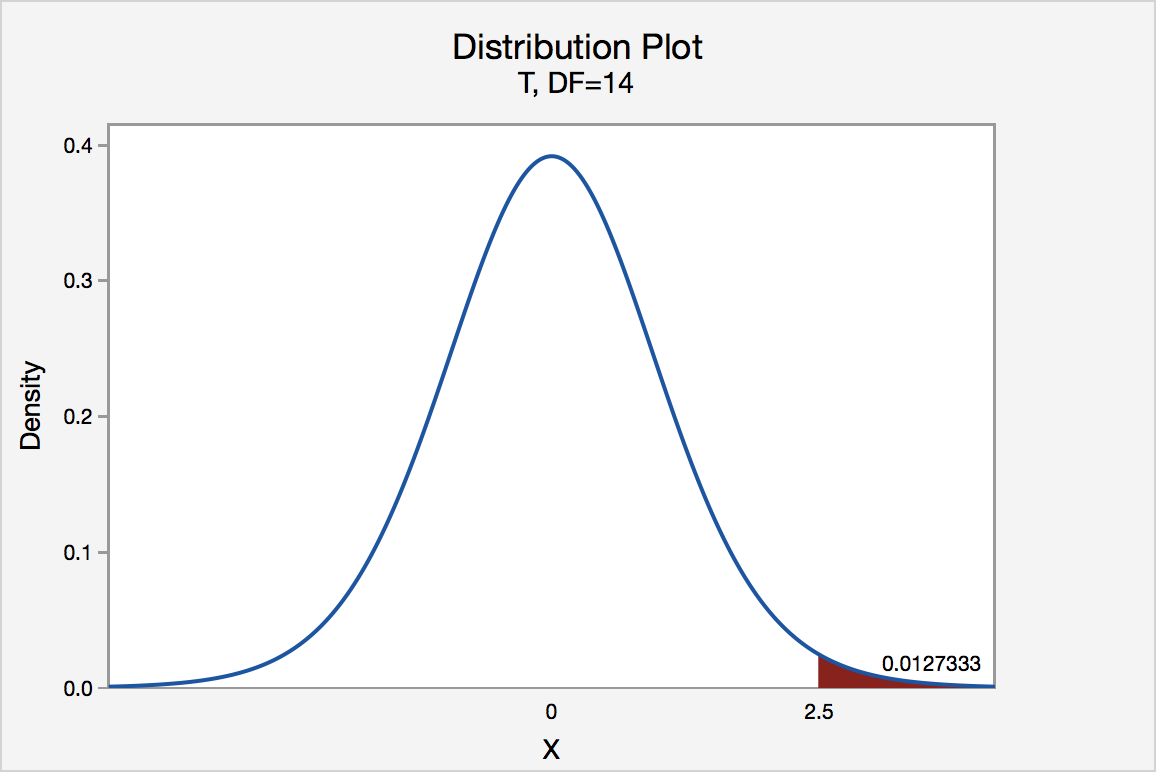

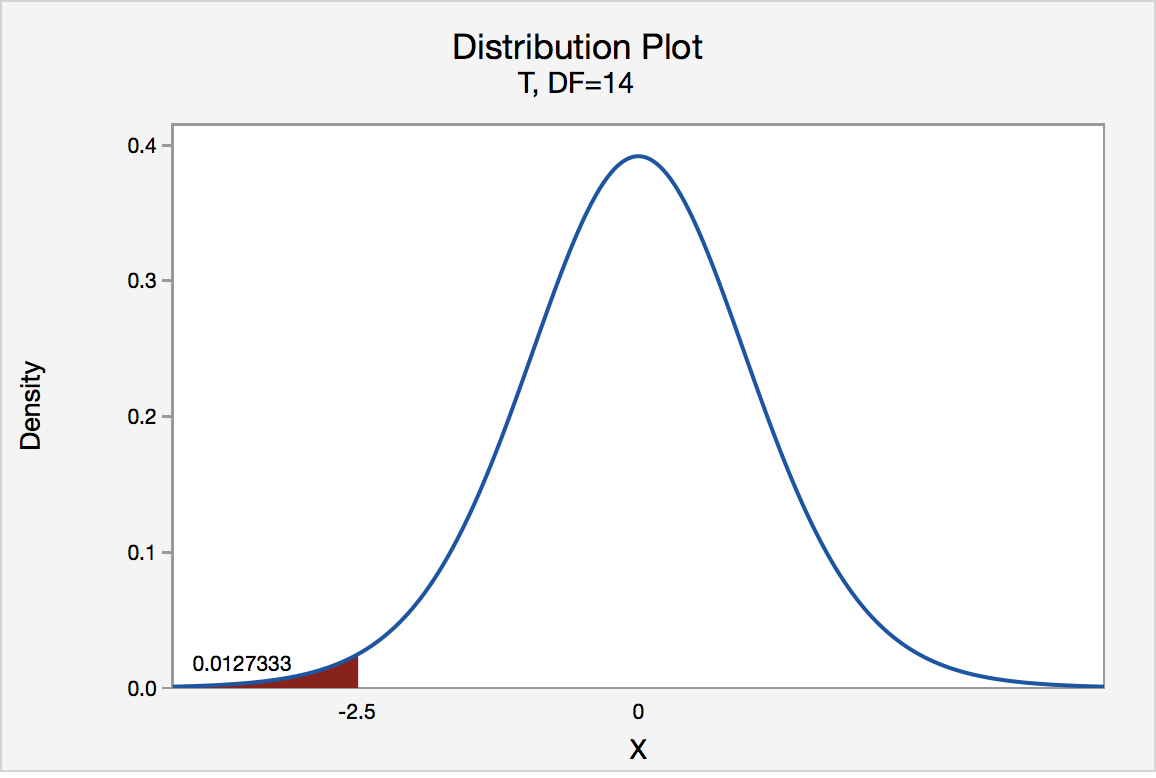

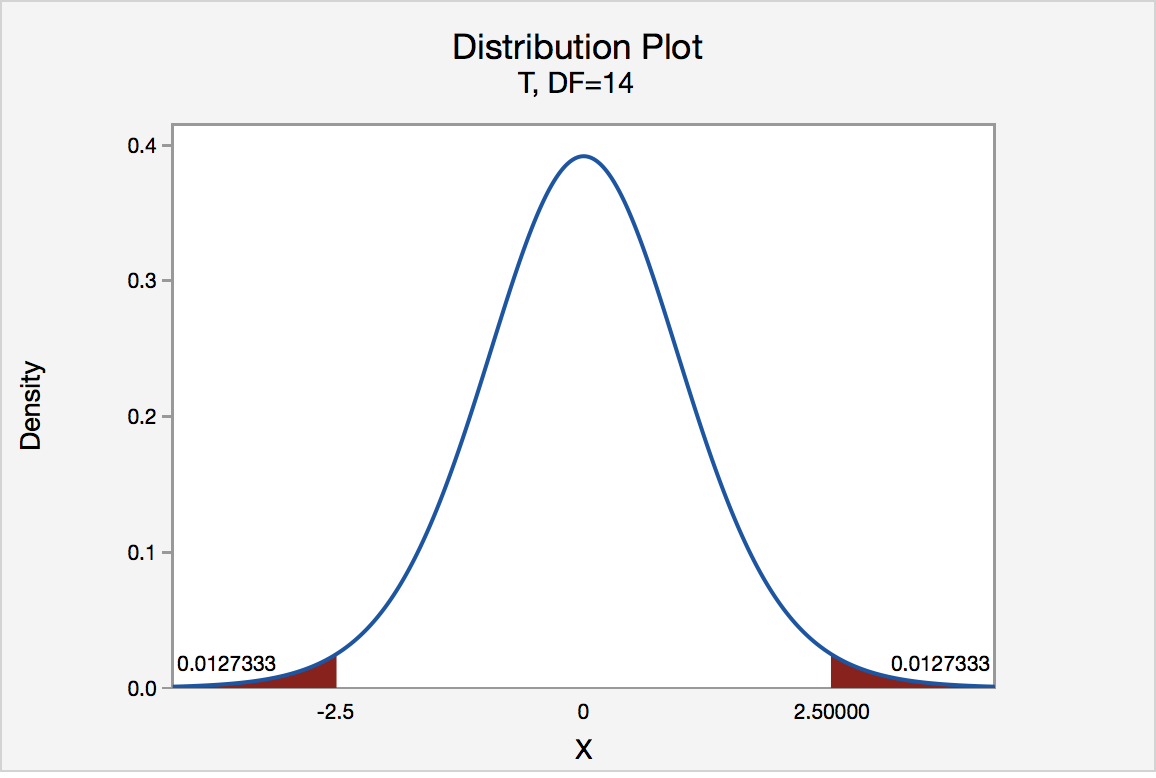

Recall that the p-value is the probability (calculated under the assumption that the null hypothesis is true) that the test statistic will produce values at least as extreme as the T-score produced for your sample . As probabilities correspond to areas under the density function, p-value from t-test can be nicely illustrated with the help of the following pictures:

The following formulae say how to calculate the p-value from a t-test. By $\mathrm{cdf}_{t,d}$ we denote the cumulative distribution function of the t-Student distribution with $d$ degrees of freedom:

p-value from left-tailed t-test:

$\text{p-value} = \mathrm{cdf}_{t,d}(t_{\text{score}})$

p-value from right-tailed t-test:

$\text{p-value} = 1 - \mathrm{cdf}_{t,d}(t_{\text{score}})$

p-value from two-tailed t-test:

$\text{p-value} = 2 \times \mathrm{cdf}_{t,d}(-|t_{\text{score}}|)$

or, equivalently: $\text{p-value} = 2 - 2 \times \mathrm{cdf}_{t,d}(|t_{\text{score}}|)$

However, the cdf of the t-distribution is given by a somewhat complicated formula. To find the p-value by hand, you would need to resort to statistical tables, where approximate cdf values are collected, or to specialized statistical software. Fortunately, our t-test calculator determines the p-value from t-test for you in the blink of an eye!

Recall, that in the critical values approach to hypothesis testing, you need to set a significance level, α, before computing the critical values , which in turn give rise to critical regions (a.k.a. rejection regions).

Formulas for critical values employ the quantile function of t-distribution, i.e., the inverse of the cdf :

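Critical value for left-tailed t-test: $\mathrm{cdf}_{t,d}^{-1}(\alpha)$

critical region:

$(-\infty, \mathrm{cdf}_{t,d}^{-1}(\alpha)]$

Critical value for right-tailed t-test: $\mathrm{cdf}_{t,d}^{-1}(1-\alpha)$

critical region:

$[\mathrm{cdf}_{t,d}^{-1}(1-\alpha), \infty)$

Critical values for two-tailed t-test: $\pm\mathrm{cdf}_{t,d}^{-1}(1-\alpha/2)$

critical region:

$(-\infty, -\mathrm{cdf}_{t,d}^{-1}(1-\alpha/2)] \cup [\mathrm{cdf}_{t,d}^{-1}(1-\alpha/2), \infty)$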

To decide the fate of the null hypothesis, just check if your T-score lies within the critical region:

If your T-score belongs to the critical region , reject the null hypothesis and accept the alternative hypothesis.

If your T-score is outside the critical region , then you don't have enough evidence to reject the null hypothesis.
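The p-value and critical-value recipes above can be sketched without statistical tables by integrating the t density numerically. This is a rough illustration, not how the calculator works: the cdf is approximated with Simpson's rule, the quantile is found by bisection on a fixed bracket, and all function names and example inputs are assumptions.

```python
import math

def t_pdf(x, df):
    """Density of the t-Student distribution with df degrees of freedom."""
    log_c = (math.lgamma((df + 1) / 2) - math.lgamma(df / 2)
             - 0.5 * math.log(df * math.pi))
    return math.exp(log_c - (df + 1) / 2 * math.log1p(x * x / df))

def t_cdf(x, df, steps=2000):
    """Approximate cdf: Simpson's rule on [0, |x|] plus symmetry about 0."""
    a = abs(x)
    h = a / steps  # steps must be even for Simpson's rule
    total = t_pdf(0.0, df) + t_pdf(a, df)
    for i in range(1, steps):
        total += (4 if i % 2 else 2) * t_pdf(i * h, df)
    area = total * h / 3
    return 0.5 + area if x >= 0 else 0.5 - area

def t_p_value(t_score, df, tail):
    """tail is 'left', 'right', or 'two'."""
    if tail == "left":
        return t_cdf(t_score, df)
    if tail == "right":
        return 1 - t_cdf(t_score, df)
    if tail == "two":
        return 2 * t_cdf(-abs(t_score), df)
    raise ValueError("tail must be 'left', 'right', or 'two'")

def t_quantile(p, df, lo=-100.0, hi=100.0):
    """Inverse cdf by bisection; assumes the answer lies inside [lo, hi]."""
    for _ in range(60):
        mid = (lo + hi) / 2
        if t_cdf(mid, df) < p:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2

def in_critical_region(t_score, df, alpha, tail):
    if tail == "left":
        return t_score <= t_quantile(alpha, df)
    if tail == "right":
        return t_score >= t_quantile(1 - alpha, df)
    return abs(t_score) >= t_quantile(1 - alpha / 2, df)

# Illustrative inputs: T-score of -1.77 with 49 degrees of freedom.
p_two = t_p_value(-1.77, 49, "two")
rejected = in_critical_region(-1.77, 49, alpha=0.05, tail="two")
```

Both approaches lead to the same decision for these inputs: the two-tailed p-value is above 0.05 and the T-score lies outside the two-tailed critical region. The fixed bisection bracket is a simplification that would not cover extreme quantiles for very small degrees of freedom.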

Choose the type of t-test you wish to perform:

A one-sample t-test (to test the mean of a single group against a hypothesized mean);

A two-sample t-test (to compare the means for two groups); or

A paired t-test (to check how the mean from the same group changes after some intervention).

Two-tailed;

Left-tailed; or

Right-tailed.

This t-test calculator allows you to use either the p-value approach or the critical regions approach to hypothesis testing!

Enter your T-score and the number of degrees of freedom . If you don't know them, provide some data about your sample(s): sample size, mean, and standard deviation, and our t-test calculator will compute the T-score and degrees of freedom for you .

Once all the parameters are present, the p-value, or critical region, will immediately appear underneath the t-test calculator, along with an interpretation!

The null hypothesis is that the population mean is equal to some value $\mu_0$.

The alternative hypothesis is that the population mean is:

- different from $\mu_0$;

- smaller than $\mu_0$; or

- greater than $\mu_0$.

One-sample t-test formula:

$$t = \frac{\bar{x} - \mu_0}{s} \cdot \sqrt{n}$$

where:

- $\mu_0$: Mean postulated in the null hypothesis;

- $n$: Sample size;

- $\bar{x}$: Sample mean; and

- $s$: Sample standard deviation.

Number of degrees of freedom in t-test (one-sample) = $n - 1$.

The null hypothesis is that the actual difference between these groups' means, $\mu_1$ and $\mu_2$, is equal to some pre-set value, $\Delta$.

The alternative hypothesis is that the difference $\mu_1 - \mu_2$ is:

- Different from $\Delta$;

- Smaller than $\Delta$; or

- Greater than $\Delta$.

In particular, if this pre-determined difference is zero ($\Delta = 0$):

The null hypothesis is that the population means are equal.

The alternative hypothesis is that:

- $\mu_1$ and $\mu_2$ are different from one another;

- $\mu_1$ is smaller than $\mu_2$; or

- $\mu_1$ is greater than $\mu_2$.

Formally, to perform a t-test, we should additionally assume that the variances of the two populations are equal (this assumption is called the homogeneity of variance ).

There is a version of a t-test that can be applied without the assumption of homogeneity of variance: it is called a Welch's t-test . For your convenience, we describe both versions.

Two-sample t-test if variances are equal

Use this test if you know that the two populations' variances are the same (or very similar).

Two-sample t-test formula (with equal variances):

$$t = \frac{\bar{x}_1 - \bar{x}_2 - \Delta}{s_p \cdot \sqrt{\frac{1}{n_1} + \frac{1}{n_2}}}$$

where $s_p$ is the so-called pooled standard deviation, which we compute as:

$$s_p = \sqrt{\frac{(n_1 - 1) s_1^2 + (n_2 - 1) s_2^2}{n_1 + n_2 - 2}}$$

and:

- $\Delta$: Mean difference postulated in the null hypothesis;

- $n_1$: First sample size;

- $\bar{x}_1$: Mean for the first sample;

- $s_1$: Standard deviation in the first sample;

- $n_2$: Second sample size;

- $\bar{x}_2$: Mean for the second sample; and

- $s_2$: Standard deviation in the second sample.

Number of degrees of freedom in t-test (two samples, equal variances) = $n_1 + n_2 - 2$.
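A minimal sketch of the equal-variance two-sample t-test follows, assuming the standard pooled-variance form of the statistic. The function name `pooled_t` and the summary statistics are made up for the example.

```python
import math

def pooled_t(mean1, s1, n1, mean2, s2, n2, delta=0.0):
    """Return (t, degrees of freedom, pooled standard deviation)."""
    df = n1 + n2 - 2
    # Pooled standard deviation: variances weighted by their degrees of freedom.
    s_pooled = math.sqrt(((n1 - 1) * s1**2 + (n2 - 1) * s2**2) / df)
    t = (mean1 - mean2 - delta) / (s_pooled * math.sqrt(1 / n1 + 1 / n2))
    return t, df, s_pooled

# Illustrative summary statistics for two independent groups of 8.
t_stat, df, s_pooled = pooled_t(mean1=10.0, s1=2.0, n1=8,
                                mean2=8.0, s2=2.0, n2=8)
```

When both samples have the same standard deviation, the pooled value equals that common standard deviation, which makes the example easy to check by hand.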

Two-sample t-test if variances are unequal (Welch's t-test)

Use this test if the variances of your populations are different.

Two-sample Welch's t-test formula if variances are unequal:

$$t = \frac{\bar{x}_1 - \bar{x}_2 - \Delta}{\sqrt{s_1^2 / n_1 + s_2^2 / n_2}}$$

where:

- $\Delta$: Mean difference postulated in the null hypothesis;

- $n_1$, $n_2$: Sizes of the first and second samples;

- $\bar{x}_1$, $\bar{x}_2$: Means of the first and second samples;

- $s_1$: Standard deviation in the first sample;

- $s_2$: Standard deviation in the second sample.

The number of degrees of freedom in a Welch's t-test (two-sample t-test with unequal variances) is difficult to determine exactly. We can approximate it with the help of the following Satterthwaite formula:

$$d \approx \frac{\left(s_1^2 / n_1 + s_2^2 / n_2\right)^2}{\frac{(s_1^2 / n_1)^2}{n_1 - 1} + \frac{(s_2^2 / n_2)^2}{n_2 - 1}}$$

Alternatively, you can take the smaller of $n_1 - 1$ and $n_2 - 1$ as a conservative estimate for the number of degrees of freedom.

🔎 The Satterthwaite formula for the degrees of freedom can be rewritten as a scaled weighted harmonic mean of the degrees of freedom of the respective samples, $n_1 - 1$ and $n_2 - 1$, with weights proportional to $(s_1^2 / n_1)^2$ and $(s_2^2 / n_2)^2$.
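The Welch statistic and both degrees-of-freedom options can be sketched as below, assuming the usual Welch and Satterthwaite expressions. The function name `welch_t` and the summary statistics are illustrative assumptions.

```python
import math

def welch_t(mean1, s1, n1, mean2, s2, n2, delta=0.0):
    """Return (t, Satterthwaite df, conservative df) for Welch's t-test."""
    v1 = s1**2 / n1  # estimated variance of the first sample mean
    v2 = s2**2 / n2  # estimated variance of the second sample mean
    t = (mean1 - mean2 - delta) / math.sqrt(v1 + v2)
    satterthwaite_df = (v1 + v2) ** 2 / (v1**2 / (n1 - 1) + v2**2 / (n2 - 1))
    conservative_df = min(n1 - 1, n2 - 1)
    return t, satterthwaite_df, conservative_df

# Illustrative summary statistics with clearly different spreads.
t_stat, df_approx, df_safe = welch_t(mean1=10.0, s1=3.0, n1=10,
                                     mean2=8.0, s2=1.0, n2=20)
```

The Satterthwaite value is generally not an integer. It always lies between the conservative choice and the pooled value $n_1 + n_2 - 2$, and here it sits close to the degrees of freedom of the noisier first sample.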

As we commonly perform a paired t-test when we have data about the same subjects measured twice (before and after some treatment), let us adopt the convention of referring to the samples as the pre-group and post-group.

The null hypothesis is that the true difference between the means of pre- and post-populations is equal to some pre-set value, $\Delta$.

The alternative hypothesis is that the actual difference between these means is:

- Different from $\Delta$;

- Smaller than $\Delta$; or

- Greater than $\Delta$.

Typically, this pre-determined difference is zero. We can then reformulate the hypotheses as follows:

The null hypothesis is that the pre- and post-means are the same, i.e., the treatment has no impact on the population .

The alternative hypothesis:

- The pre- and post-means are different from one another (treatment has some effect);

- The pre-mean is smaller than the post-mean (treatment increases the result); or

- The pre-mean is greater than the post-mean (treatment decreases the result).

Paired t-test formula

In fact, a paired t-test is technically the same as a one-sample t-test! Let us see why it is so. Let $x_1, \ldots, x_n$ be the pre observations and $y_1, \ldots, y_n$ the respective post observations. That is, $x_i, y_i$ are the before and after measurements of the $i$-th subject.

For each subject, compute the difference, $d_i := x_i - y_i$. All that happens next is just a one-sample t-test performed on the sample of differences $d_1, \ldots, d_n$. Take a look at the formula for the T-score:

$$t = \frac{\bar{x} - \Delta}{s} \cdot \sqrt{n}$$

where:

- $\Delta$: Mean difference postulated in the null hypothesis;

- $n$: Size of the sample of differences, i.e., the number of pairs;

- $\bar{x}$: Mean of the sample of differences; and

- $s$: Standard deviation of the sample of differences.

Number of degrees of freedom in t-test (paired): $n - 1$
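The reduction of a paired t-test to a one-sample t-test on the differences can be sketched as follows. The function name `paired_t` and the measurements are invented for illustration; the differences are taken as pre minus post, matching the convention above.

```python
import math
from statistics import mean, stdev

def paired_t(pre, post, delta=0.0):
    """Return (t, degrees of freedom) for a paired t-test."""
    if len(pre) != len(post):
        raise ValueError("pre and post must contain one value per subject")
    diffs = [x - y for x, y in zip(pre, post)]   # d_i = x_i - y_i
    n = len(diffs)
    # One-sample t-test on the differences (stdev is the sample standard deviation).
    t = (mean(diffs) - delta) / (stdev(diffs) / math.sqrt(n))
    return t, n - 1

# Made-up before/after measurements for four subjects.
pre = [10.0, 12.0, 9.0, 11.0]
post = [12.0, 15.0, 10.0, 14.0]
t_stat, df = paired_t(pre, post)
```

Because every post value exceeds its pre value here, the mean difference and the T-score are negative, which corresponds to the "pre-mean is smaller than the post-mean" alternative.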

We use a Z-test when we want to test the population mean of a normally distributed dataset, which has a known population variance . If the number of degrees of freedom is large, then the t-Student distribution is very close to N(0,1).

Hence, if there are many data points (at least 30), you may swap a t-test for a Z-test, and the results will be almost identical. However, for small samples with unknown variance, remember to use the t-test because, in such cases, the t-Student distribution differs significantly from the N(0,1)!

🙋 Have you concluded you need to perform the z-test? Head straight to our z-test calculator !

What is a t-test?

A t-test is a widely used statistical test that analyzes the means of one or two groups of data. For instance, a t-test is performed on medical data to determine whether a new drug really helps.

What are different types of t-tests?

Different types of t-tests are:

- One-sample t-test;

- Two-sample t-test; and

- Paired t-test.

How to find the t value in a one sample t-test?

To find the t-value:

- Subtract the null hypothesis mean from the sample mean value.

- Divide the difference by the standard deviation of the sample.

- Multiply the resultant with the square root of the sample size.


Unit 12: Significance tests (hypothesis testing)

About this unit.

Significance tests give us a formal process for using sample data to evaluate the likelihood of some claim about a population value. Learn how to conduct significance tests and calculate p-values to see how likely a sample result is to occur by random chance. You'll also see how we use p-values to make conclusions about hypotheses.

The idea of significance tests

- Simple hypothesis testing

- Idea behind hypothesis testing

- Examples of null and alternative hypotheses

- P-values and significance tests

- Comparing P-values to different significance levels

- Estimating a P-value from a simulation

- Using P-values to make conclusions

Error probabilities and power

- Introduction to Type I and Type II errors

- Type 1 errors

- Examples identifying Type I and Type II errors

- Introduction to power in significance tests

- Examples thinking about power in significance tests

- Consequences of errors and significance

Tests about a population proportion

- Constructing hypotheses for a significance test about a proportion

- Conditions for a z test about a proportion

- Reference: Conditions for inference on a proportion

- Calculating a z statistic in a test about a proportion

- Calculating a P-value given a z statistic

- Making conclusions in a test about a proportion

Tests about a population mean

- Writing hypotheses for a significance test about a mean

- Conditions for a t test about a mean

- Reference: Conditions for inference on a mean

- When to use z or t statistics in significance tests

- Example calculating t statistic for a test about a mean

- Using TI calculator for P-value from t statistic

- Using a table to estimate P-value from t statistic

- Comparing P-value from t statistic to significance level

- Free response example: Significance test for a mean

More significance testing videos

- Hypothesis testing and p-values (Opens a modal)

- One-tailed and two-tailed tests (Opens a modal)

- Z-statistics vs. T-statistics (Opens a modal)

- Small sample hypothesis test (Opens a modal)

- Large sample proportion hypothesis testing (Opens a modal)

- FOR INSTRUCTOR

- FOR INSTRUCTORS

8.4.3 Hypothesis Testing for the Mean

$\quad$ $H_0$: $\mu=\mu_0$, $\quad$ $H_1$: $\mu \neq \mu_0$.

$\quad$ $H_0$: $\mu \leq \mu_0$, $\quad$ $H_1$: $\mu > \mu_0$.

$\quad$ $H_0$: $\mu \geq \mu_0$, $\quad$ $H_1$: $\mu \lt \mu_0$.

Two-sided Tests for the Mean:

Therefore, we can suggest the following test. Choose a threshold, and call it $c$. If $|W| \leq c$, accept $H_0$, and if $|W|>c$, accept $H_1$. How do we choose $c$? If $\alpha$ is the required significance level, we must have \begin{align} P(\textrm{type I error}) = P(|W| > c \; | \; H_0) = \alpha. \end{align}

- As discussed above, we let \begin{align} W(X_1,X_2, \cdots,X_n)=\frac{\overline{X}-\mu_0}{\sigma / \sqrt{n}}. \end{align} Note that, assuming $H_0$, $W \sim N(0,1)$. We will choose a threshold, $c$. If $|W| \leq c$, we accept $H_0$, and if $|W|>c$, accept $H_1$. To choose $c$, we let \begin{align} P(|W| > c \; | \; H_0) =\alpha. \end{align} Since the standard normal PDF is symmetric around $0$, we have \begin{align} P(|W| > c \; | \; H_0) = 2 P(W>c | \; H_0). \end{align} Thus, we conclude $P(W>c | \; H_0)=\frac{\alpha}{2}$. Therefore, \begin{align} c=z_{\frac{\alpha}{2}}. \end{align} Therefore, we accept $H_0$ if \begin{align} \left|\frac{\overline{X}-\mu_0}{\sigma / \sqrt{n}} \right| \leq z_{\frac{\alpha}{2}}, \end{align} and reject it otherwise.
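The two-sided decision rule can be sketched in Python. This is a minimal illustration that assumes $\sigma$ is known; the function name `two_sided_z_test` and the sample numbers are hypothetical, and the standard library's `statistics.NormalDist` supplies the quantile $z_{\frac{\alpha}{2}}$.

```python
from math import sqrt
from statistics import NormalDist


def two_sided_z_test(xbar, mu0, sigma, n, alpha=0.05):
    """Return (W, c, accept_H0) for H0: mu = mu0 versus H1: mu != mu0."""
    w = (xbar - mu0) / (sigma / sqrt(n))      # test statistic W
    c = NormalDist().inv_cdf(1 - alpha / 2)   # threshold c = z_{alpha/2}
    return w, c, abs(w) <= c


# Hypothetical numbers, chosen only for illustration.
w, c, accept = two_sided_z_test(xbar=5.4, mu0=5.0, sigma=1.0, n=25)
print(f"W = {w:.3f}, c = {c:.3f}, accept H0: {accept}")
```

With these made-up inputs $|W|$ exceeds $c$, so the sketch reports that $H_0$ is rejected.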

- We have \begin{align} \beta (\mu) &=P(\textrm{type II error}) = P(\textrm{accept }H_0 \; | \; \mu) \\ &= P\left(\left|\frac{\overline{X}-\mu_0}{\sigma / \sqrt{n}} \right| \lt z_{\frac{\alpha}{2}}\; | \; \mu \right). \end{align} If $X_i \sim N(\mu,\sigma^2)$, then $\overline{X} \sim N(\mu, \frac{\sigma^2}{n})$. Thus, \begin{align} \beta (\mu)&=P\left(\left|\frac{\overline{X}-\mu_0}{\sigma / \sqrt{n}} \right| \lt z_{\frac{\alpha}{2}}\; | \; \mu \right)\\ &=P\left(\mu_0- z_{\frac{\alpha}{2}} \frac{\sigma}{\sqrt{n}} \leq \overline{X} \leq \mu_0+ z_{\frac{\alpha}{2}} \frac{\sigma}{\sqrt{n}}\right)\\ &=\Phi\left(z_{\frac{\alpha}{2}}+\frac{\mu_0-\mu}{\sigma / \sqrt{n}}\right)-\Phi\left(-z_{\frac{\alpha}{2}}+\frac{\mu_0-\mu}{\sigma / \sqrt{n}}\right). \end{align}
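The closed form for $\beta(\mu)$ can be evaluated numerically. The sketch below is only an illustration: the function name `type_ii_error` and the values of $\mu_0$, $\sigma$, and $n$ are hypothetical, and $\Phi$ is taken from the standard library's `statistics.NormalDist`.

```python
from math import sqrt
from statistics import NormalDist


def type_ii_error(mu, mu0, sigma, n, alpha=0.05):
    """beta(mu) for the two-sided z test of H0: mu = mu0."""
    phi = NormalDist().cdf
    z = NormalDist().inv_cdf(1 - alpha / 2)   # z_{alpha/2}
    shift = (mu0 - mu) / (sigma / sqrt(n))
    return phi(z + shift) - phi(-z + shift)


# Hypothetical setting: mu0 = 5, sigma = 1, n = 25.
for mu in (5.0, 5.2, 5.5, 6.0):
    print(mu, round(type_ii_error(mu, mu0=5.0, sigma=1.0, n=25), 4))
```

At $\mu=\mu_0$ the expression reduces to $1-\alpha$, and it shrinks as $\mu$ moves away from $\mu_0$, which is a quick sanity check on the formula.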

- Let $S^2$ be the sample variance for this random sample. Then, the random variable $W$ defined as \begin{equation} W(X_1,X_2, \cdots, X_n)=\frac{\overline{X}-\mu_0}{S / \sqrt{n}} \end{equation} has a $t$-distribution with $n-1$ degrees of freedom, i.e., $W \sim T(n-1)$. Thus, we can repeat the analysis of Example 8.24 here. The only difference is that we need to replace $\sigma$ by $S$ and $z_{\frac{\alpha}{2}}$ by $t_{\frac{\alpha}{2},n-1}$. Therefore, we accept $H_0$ if \begin{align} |W| \leq t_{\frac{\alpha}{2},n-1}, \end{align} and reject it otherwise. Let us look at a numerical example of this case.

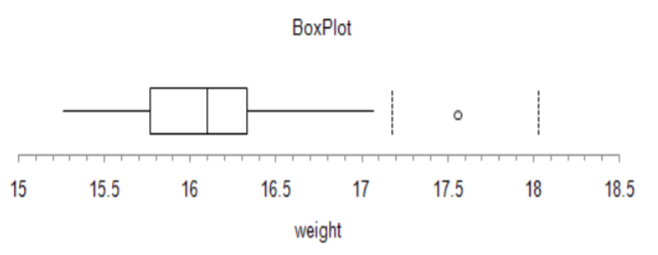

$\quad$ $H_0$: $\mu=170$, $\quad$ $H_1$: $\mu \neq 170$.

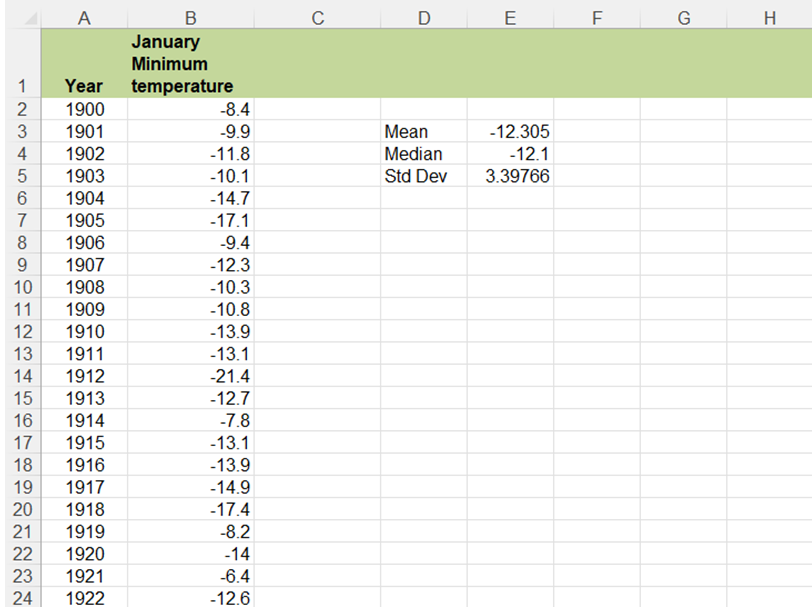

- Let's first compute the sample mean and the sample standard deviation. The sample mean is \begin{align} \overline{X}&=\frac{X_1+X_2+X_3+X_4+X_5+X_6+X_7+X_8+X_9}{9}\\ &=165.8 \end{align} The sample variance is given by \begin{align} {S}^2=\frac{1}{9-1} \sum_{k=1}^9 (X_k-\overline{X})^2&=68.01 \end{align} The sample standard deviation is given by \begin{align} S&= \sqrt{S^2}=8.25 \end{align} The following MATLAB code can be used to obtain these values: $\mathtt{x=[176.2,157.9,160.1,180.9,165.1,167.2,162.9,155.7,166.2];}$ $\mathtt{m=mean(x);}$ $\mathtt{v=var(x);}$ $\mathtt{s=std(x);}$ Now, our test statistic is \begin{align} W(X_1,X_2, \cdots, X_9)&=\frac{\overline{X}-\mu_0}{S / \sqrt{n}}\\ &=\frac{165.8-170}{8.25 / 3}\approx -1.53 \end{align} Thus, $|W| \approx 1.53$. Also, we have \begin{align} t_{\frac{\alpha}{2},n-1} = t_{0.025,8} \approx 2.31 \end{align} The above value can be obtained in MATLAB using the command $\mathtt{tinv(0.975,8)}$. Thus, we conclude \begin{align} |W| \leq t_{\frac{\alpha}{2},n-1}. \end{align} Therefore, we accept $H_0$. In other words, we do not have enough evidence to conclude that the average height in the city is different from the average height in the country.
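For readers without MATLAB, the same quantities can be obtained with Python's standard library. This is a sketch rather than part of the original solution; the critical value is hard-coded from the text ($t_{0.025,8} \approx 2.31$) instead of being computed, and the variable names are illustrative.

```python
from math import sqrt
from statistics import mean, stdev, variance

x = [176.2, 157.9, 160.1, 180.9, 165.1, 167.2, 162.9, 155.7, 166.2]
mu0 = 170
n = len(x)

m = mean(x)       # sample mean
v = variance(x)   # sample variance (divides by n - 1)
s = stdev(x)      # sample standard deviation
w = (m - mu0) / (s / sqrt(n))   # test statistic W

t_crit = 2.31     # t_{0.025,8}, value quoted in the text
print(f"mean = {m:.1f}, var = {v:.2f}, std = {s:.2f}, W = {w:.2f}")
print("accept H0" if abs(w) <= t_crit else "reject H0")
```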

Let us summarize what we have obtained for the two-sided test for the mean.

One-sided Tests for the Mean:

- As before, we define the test statistic as \begin{align} W(X_1,X_2, \cdots,X_n)=\frac{\overline{X}-\mu_0}{\sigma / \sqrt{n}}. \end{align} If $H_0$ is true (i.e., $\mu \leq \mu_0$), we expect $\overline{X}$ (and thus $W$) to be relatively small, while if $H_1$ is true, we expect $\overline{X}$ (and thus $W$) to be larger. This suggests the following test: Choose a threshold, and call it $c$. If $W \leq c$, accept $H_0$, and if $W>c$, accept $H_1$. How do we choose $c$? If $\alpha$ is the required significance level, we must have \begin{align} P(\textrm{type I error}) &= P(\textrm{Reject }H_0 \; | \; H_0) \\ &= P(W > c \; | \; \mu \leq \mu_0) \leq \alpha. \end{align} Here, the probability of type I error depends on $\mu$. More specifically, for any $\mu \leq \mu_0$, we can write \begin{align} P(\textrm{type I error} \; | \; \mu) &= P(\textrm{Reject }H_0 \; | \; \mu) \\ &= P(W > c \; | \; \mu)\\ &=P \left(\frac{\overline{X}-\mu_0}{\sigma / \sqrt{n}}> c \; | \; \mu\right)\\ &=P \left(\frac{\overline{X}-\mu}{\sigma / \sqrt{n}}+\frac{\mu-\mu_0}{\sigma / \sqrt{n}}> c \; | \; \mu\right)\\ &=P \left(\frac{\overline{X}-\mu}{\sigma / \sqrt{n}}> c+\frac{\mu_0-\mu}{\sigma / \sqrt{n}} \; | \; \mu\right)\\ &\leq P \left(\frac{\overline{X}-\mu}{\sigma / \sqrt{n}}> c \; | \; \mu\right) \quad (\textrm{ since }\mu \leq \mu_0)\\ &=1-\Phi(c) \quad \big(\textrm{ since given }\mu, \frac{\overline{X}-\mu}{\sigma / \sqrt{n}} \sim N(0,1) \big). \end{align} Thus, we can choose $\alpha=1-\Phi(c)$, which results in \begin{align} c=z_{\alpha}. \end{align} Therefore, we accept $H_0$ if \begin{align} \frac{\overline{X}-\mu_0}{\sigma / \sqrt{n}} \leq z_{\alpha}, \end{align} and reject it otherwise.
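The bound in this derivation can be checked numerically. The sketch below evaluates $P(W>c \; | \; \mu)=1-\Phi\big(c+\frac{\mu_0-\mu}{\sigma/\sqrt{n}}\big)$ for a few values of $\mu \leq \mu_0$ with $c=z_{\alpha}$; the function name `type_i_error` and the numbers are hypothetical and serve only as an illustration.

```python
from math import sqrt
from statistics import NormalDist


def type_i_error(mu, mu0, sigma, n, c):
    """P(W > c | mu) for the upper-tail z test, for a true mean mu <= mu0."""
    shift = (mu0 - mu) / (sigma / sqrt(n))
    return 1 - NormalDist().cdf(c + shift)


alpha = 0.05
c = NormalDist().inv_cdf(1 - alpha)   # c = z_alpha

# Hypothetical setting: mu0 = 5, sigma = 1, n = 25.
for mu in (4.6, 4.8, 5.0):
    print(mu, round(type_i_error(mu, mu0=5.0, sigma=1.0, n=25, c=c), 4))
```

The probability reaches $\alpha$ only at $\mu=\mu_0$ and is smaller for every $\mu<\mu_0$, which is why the threshold $c=z_{\alpha}$ controls the type I error over the whole null hypothesis.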

$\quad$ $H_0$: $\mu \geq \mu_0$, $\quad$ $H_1$: $\mu \lt \mu_0$,


8.6: Hypothesis Test of a Single Population Mean with Examples


Steps for performing Hypothesis Test of a Single Population Mean

Step 1: State your hypotheses about the population mean.

Step 2: Summarize the data. State a significance level. State and check the conditions required for the procedure.

- Find or identify the sample size, \(n\), the sample mean, \(\bar{x}\), and the sample standard deviation, \(s\).

The sampling distribution of the one-mean test statistic is approximately a \(t\)-distribution if the following conditions are met:

- The sample is random with independent observations.

- The sample is large enough: the population must be Normal or the sample size must be at least 30.

Step 3: Perform the procedure based on the assumption that \(H_{0}\) is true

- Find the Estimated Standard Error: \(SE=\frac{s}{\sqrt{n}}\).

- Compute the observed value of the test statistic: \(T_{obs}=\frac{\bar{x}-\mu_{0}}{SE}\).

- Check the type of the test (right-, left-, or two-tailed)

- Find the p-value in order to measure your level of surprise.

Step 4: Make a decision about \(H_{0}\) and \(H_{a}\)

- Do you reject or not reject your null hypothesis?

Step 5: Make a conclusion

- What does this mean in the context of the data?

The following examples illustrate a left-, right-, and two-tailed test.

Example \(\PageIndex{1}\)

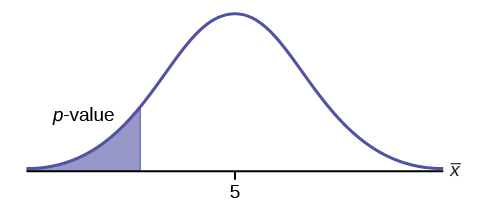

\(H_{0}: \mu = 5, H_{a}: \mu < 5\)

Test of a single population mean. \(H_{a}\) tells you the test is left-tailed. The picture of the \(p\)-value is as follows:

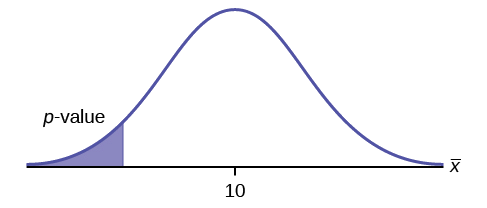

Exercise \(\PageIndex{1}\)

\(H_{0}: \mu = 10, H_{a}: \mu < 10\)

Assume the \(p\)-value is 0.0935. What type of test is this? Draw the picture of the \(p\)-value.

left-tailed test

Example \(\PageIndex{2}\)

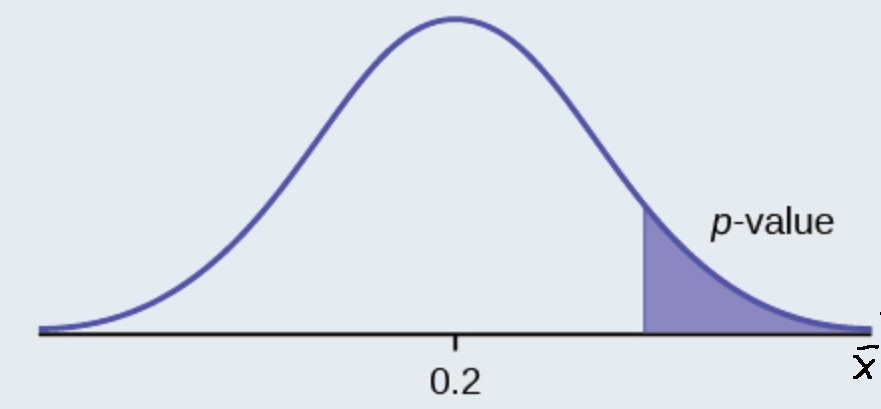

\(H_{0}: \mu \leq 0.2, H_{a}: \mu > 0.2\)

This is a test of a single population mean. \(H_{a}\) tells you the test is right-tailed. The picture of the \(p\)-value is as follows:

Exercise \(\PageIndex{2}\)

\(H_{0}: \mu \leq 1, H_{a}: \mu > 1\)

Assume the \(p\)-value is 0.1243. What type of test is this? Draw the picture of the \(p\)-value.

right-tailed test

Example \(\PageIndex{3}\)

\(H_{0}: \mu = 50, H_{a}: \mu \neq 50\)

This is a test of a single population mean. \(H_{a}\) tells you the test is two-tailed. The picture of the \(p\)-value is as follows.

Exercise \(\PageIndex{3}\)

\(H_{0}: \mu = 0.5, H_{a}: \mu \neq 0.5\)

Assume the \(p\)-value is 0.2564. What type of test is this? Draw the picture of the \(p\)-value.

two-tailed test

Full Hypothesis Test Examples

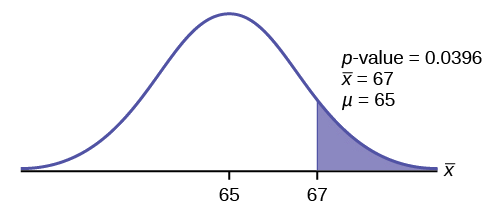

Example \(\PageIndex{4}\)

Statistics students believe that the mean score on the first statistics test is 65. A statistics instructor thinks the mean score is higher than 65. He samples ten statistics students and obtains the scores 65 65 70 67 66 63 63 68 72 71. He performs a hypothesis test using a 5% level of significance. The data are assumed to be from a normal distribution.

Set up the hypothesis test:

A 5% level of significance means that \(\alpha = 0.05\). This is a test of a single population mean.

\(H_{0}: \mu = 65, \; H_{a}: \mu > 65\)

Since the instructor thinks the average score is higher, use a "\(>\)". The "\(>\)" means the test is right-tailed.

Determine the distribution needed:

Random variable: \(\bar{X} =\) average score on the first statistics test.

Distribution for the test: If you read the problem carefully, you will notice that there is no population standard deviation given. You are only given \(n = 10\) sample data values. Notice also that the data come from a normal distribution. This means that the distribution for the test is a Student's \(t\).

Use \(t_{df}\). Therefore, the distribution for the test is \(t_{9}\) where \(n = 10\) and \(df = 10 - 1 = 9\).

The sample mean and sample standard deviation are calculated as 67 and 3.1972 from the data.

Calculate the \(p\)-value using the Student's \(t\)-distribution:

\[t_{obs} = \dfrac{\bar{x}-\mu_{0}}{\left(\dfrac{s}{\sqrt{n}}\right)}=\dfrac{67-65}{\left(\dfrac{3.1972}{\sqrt{10}}\right)} \approx 1.9782\]

Use a \(t\)-table or Excel's T.DIST() function to find the \(p\)-value:

\(p\text{-value} = P(\bar{X} > 67) = P(T > 1.9782) = 1 - 0.9604 = 0.0396\)

Interpretation of the \(p\)-value: If the null hypothesis is true, then there is a 0.0396 probability (3.96%) that the sample mean is 67 or more.

Compare \(\alpha\) and the \(p\text{-value}\):

Since \(\alpha = 0.05\) and \(p\text{-value} = 0.0396\), \(\alpha > p\text{-value}\).

Make a decision: Since \(\alpha > p\text{-value}\), reject \(H_{0}\).

This means you reject \(\mu = 65\). In other words, you believe the average test score is more than 65.

Conclusion: At a 5% level of significance, the sample data show sufficient evidence that the mean (average) test score is more than 65, just as the statistics instructor thinks.
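The whole example can be reproduced in a few lines of Python. The sketch below uses only the standard library, so it approximates the Student's \(t\) cumulative distribution by numerically integrating its density (Simpson's rule); the helper names `t_pdf` and `t_cdf` are illustrative, not part of any library. If SciPy is installed, `scipy.stats.t.cdf` gives the same cumulative probability.

```python
from math import gamma, pi, sqrt
from statistics import mean, stdev


def t_pdf(x, df):
    """Density of Student's t distribution with df degrees of freedom."""
    coef = gamma((df + 1) / 2) / (sqrt(df * pi) * gamma(df / 2))
    return coef * (1 + x * x / df) ** (-(df + 1) / 2)


def t_cdf(x, df, steps=2000):
    """P(T <= x): Simpson's rule on [0, |x|] plus symmetry about 0."""
    a = abs(x)
    h = a / steps
    total = t_pdf(0.0, df) + t_pdf(a, df)
    for i in range(1, steps):
        total += (4 if i % 2 else 2) * t_pdf(i * h, df)
    area = total * h / 3
    return 0.5 + area if x >= 0 else 0.5 - area


scores = [65, 65, 70, 67, 66, 63, 63, 68, 72, 71]
mu0, alpha = 65, 0.05
n = len(scores)

xbar = mean(scores)                  # sample mean
s = stdev(scores)                    # sample standard deviation
se = s / sqrt(n)                     # estimated standard error
t_obs = (xbar - mu0) / se            # observed test statistic
p_value = 1 - t_cdf(t_obs, n - 1)    # right-tailed test, df = n - 1

print(f"xbar = {xbar}, s = {s:.4f}, t = {t_obs:.4f}, p = {p_value:.4f}")
print("reject H0" if p_value < alpha else "do not reject H0")
```

For a left-tailed test the \(p\)-value would be `t_cdf(t_obs, n - 1)`, and for a two-tailed test `2 * (1 - t_cdf(abs(t_obs), n - 1))`.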

The \(p\text{-value}\) can easily be calculated on a TI graphing calculator.

Put the data into a list. Press STAT and arrow over to TESTS. Press 2:T-Test. Arrow over to Data and press ENTER. Arrow down and enter 65 for \(\mu_{0}\), the name of the list where you put the data, and 1 for Freq:. Arrow down to \(\mu\): and arrow over to \(> \mu_{0}\). Press ENTER. Arrow down to Calculate and press ENTER. The calculator not only calculates the \(p\text{-value}\) (\(p = 0.0396\)) but also calculates the test statistic (\(t\)-score) for the sample mean, the sample mean, and the sample standard deviation. \(\mu > 65\) is the alternative hypothesis. Do this set of instructions again except arrow to Draw (instead of Calculate). Press ENTER. A shaded graph appears with \(t = 1.9781\) (test statistic) and \(p = 0.0396\) (\(p\text{-value}\)). Make sure when you use Draw that no other equations are highlighted in \(Y =\) and the plots are turned off.

Exercise \(\PageIndex{4}\)

It is believed that a stock price for a particular company will grow at a rate of $5 per week with a standard deviation of $1. An investor believes the stock won’t grow as quickly. The changes in stock price are recorded for ten weeks and are as follows: $4, $3, $2, $3, $1, $7, $2, $1, $1, $2. Perform a hypothesis test using a 5% level of significance. State the null and alternative hypotheses, find the \(p\)-value, state your conclusion, and identify the Type I and Type II errors.

- \(H_{0}: \mu = 5\)

- \(H_{a}: \mu < 5\)

- \(p = 0.0082\)

Because \(p < \alpha\), we reject the null hypothesis. There is sufficient evidence to suggest that the stock price of the company grows at a rate less than $5 a week.

- Type I Error: To conclude that the stock price is growing slower than $5 a week when, in fact, the stock price is growing at $5 a week (reject the null hypothesis when the null hypothesis is true).

- Type II Error: To conclude that the stock price is growing at a rate of $5 a week when, in fact, the stock price is growing slower than $5 a week (do not reject the null hypothesis when the null hypothesis is false).

Example \(\PageIndex{5}\)

The National Institute of Standards and Technology provides exact data on conductivity properties of materials. Following are conductivity measurements for 11 randomly selected pieces of a particular type of glass.

1.11; 1.07; 1.11; 1.07; 1.12; 1.08; 0.98; 0.98; 1.02; 0.95; 0.95

Is there convincing evidence that the average conductivity of this type of glass is greater than one? Use a significance level of 0.05. Assume the population is normal.

Let’s follow a four-step process to answer this statistical question.

1. State the hypotheses: \(H_{0}: \mu \leq 1\), \(H_{a}: \mu > 1\)

2. Plan: We are testing a sample mean without a known population standard deviation. Therefore, we need to use a Student's \(t\)-distribution. Assume the underlying population is normal.

3. Do the calculations: \(p\text{-value} = 0.036\)

4. State the conclusions: Since the \(p\text{-value} (= 0.036)\) is less than our alpha value, we will reject the null hypothesis. It is reasonable to state that the data support the claim that the average conductivity level is greater than one.

The hypothesis test itself has an established process. This can be summarized as follows:

- Determine \(H_{0}\) and \(H_{a}\). Remember, they are contradictory.

- Determine the random variable.

- Determine the distribution for the test.

- Draw a graph, calculate the test statistic, and use the test statistic to calculate the \(p\text{-value}\). (A \(t\)-score is an example of a test statistic.)

- Compare the preconceived \(\alpha\) with the \(p\)-value, make a decision (reject or do not reject \(H_{0}\)), and write a clear conclusion using English sentences.

Notice that in performing the hypothesis test, you use \(\alpha\) and not \(\beta\). \(\beta\) is needed to help determine the sample size of the data that is used in calculating the \(p\text{-value}\). Remember that the quantity \(1 - \beta\) is called the Power of the Test. A high power is desirable. If the power is too low, statisticians typically increase the sample size while keeping \(\alpha\) the same. If the power is low, the null hypothesis might not be rejected when it should be.
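The link between sample size and power can be illustrated with a short calculation. The sketch below is a simplification: it assumes a right-tailed \(z\) test with a known population standard deviation (the examples in this section use \(t\) tests), and the function name `upper_tail_power` and all numbers are hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def upper_tail_power(mu, mu0, sigma, n, alpha=0.05):
    """Power (1 - beta) of the right-tailed z test when the true mean is mu."""
    z_alpha = NormalDist().inv_cdf(1 - alpha)
    shift = (mu - mu0) / (sigma / sqrt(n))
    return 1 - NormalDist().cdf(z_alpha - shift)


# Hypothetical setting: H0 mean 65, true mean 67, sigma 3.2, alpha fixed at 0.05.
for n in (5, 10, 20, 40):
    print(n, round(upper_tail_power(mu=67, mu0=65, sigma=3.2, n=n), 3))
```

With \(\alpha\) held fixed, the printed power rises as \(n\) grows, which is the reason for increasing the sample size when the power is too low.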


Statistics Tutorial


A population mean is the average of a numerical value across a population.

Hypothesis tests are used to check a claim about the size of that population mean.

Hypothesis Testing a Mean

The following steps are used for a hypothesis test:

- Check the conditions

- Define the claims

- Decide the significance level

- Calculate the test statistic

- Make the conclusion

For example:

- Population : Nobel Prize winners

- Category : Age when they received the prize.

And we want to check the claim:

"The average age of Nobel Prize winners when they received the prize is more than 55"

By taking a sample of 30 randomly selected Nobel Prize winners we could find that:

The mean age in the sample (\(\bar{x}\)) is 62.1

The standard deviation of age in the sample (\(s\)) is 13.46

From this sample data we check the claim with the steps below.

1. Checking the Conditions

The conditions for a hypothesis test of a mean are:

- The sample is randomly selected

And either:

- The population data is normally distributed

- Sample size is large enough

A moderately large sample size, like 30, is typically large enough.

In the example, the sample size was 30 and it was randomly selected, so the conditions are fulfilled.

Note: Checking if the data is normally distributed can be done with specialized statistical tests.

2. Defining the Claims

We need to define a null hypothesis (\(H_{0}\)) and an alternative hypothesis (\(H_{1}\)) based on the claim we are checking.

The claim was:

"The average age of Nobel Prize winners when they received the prize is more than 55"

In this case, the parameter is the mean age of Nobel Prize winners when they received the prize (\(\mu\)).

The null and alternative hypothesis are then:

Null hypothesis : The average age was 55.

Alternative hypothesis : The average age was more than 55.

Which can be expressed with symbols as:

\(H_{0}\): \(\mu = 55 \)

\(H_{1}\): \(\mu > 55 \)

This is a 'right tailed' test, because the alternative hypothesis claims that the mean is more than in the null hypothesis.

If the data supports the alternative hypothesis, we reject the null hypothesis and accept the alternative hypothesis.


3. Deciding the Significance Level

The significance level (\(\alpha\)) is the uncertainty we accept when rejecting the null hypothesis in a hypothesis test.

The significance level is a percentage probability of accidentally making the wrong conclusion.

Typical significance levels are:

- \(\alpha = 0.1\) (10%)

- \(\alpha = 0.05\) (5%)

- \(\alpha = 0.01\) (1%)

A lower significance level means that the evidence in the data needs to be stronger to reject the null hypothesis.

There is no "correct" significance level - it only states the uncertainty of the conclusion.

Note: A 5% significance level means that, if the null hypothesis is true:

We expect to reject it 5 out of 100 times.

4. Calculating the Test Statistic

The test statistic is used to decide the outcome of the hypothesis test.

The test statistic is a standardized value calculated from the sample.

The formula for the test statistic (TS) of a population mean is:

\(\displaystyle \frac{\bar{x} - \mu}{s} \cdot \sqrt{n} \)

\(\bar{x}-\mu\) is the difference between the sample mean (\(\bar{x}\)) and the claimed population mean (\(\mu\)).

\(s\) is the sample standard deviation.

\(n\) is the sample size.

In our example:

The claimed (\(H_{0}\)) population mean (\(\mu\)) was \( 55 \)

The sample mean (\(\bar{x}\)) was \(62.1\)

The sample standard deviation (\(s\)) was \(13.46\)

The sample size (\(n\)) was \(30\)

So the test statistic (TS) is then:

\(\displaystyle \frac{62.1-55}{13.46} \cdot \sqrt{30} = \frac{7.1}{13.46} \cdot \sqrt{30} \approx 0.528 \cdot 5.477 = \underline{2.889}\)

You can also calculate the test statistic using programming language functions:

With Python use the scipy and math libraries to calculate the test statistic.

With R use built-in math and statistics functions to calculate the test statistic.
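The code listings that accompanied these sentences did not survive, so the following is a minimal Python sketch of the calculation, not the original example. It needs only the `math` module, and the variable names are illustrative.

```python
from math import sqrt

x_bar = 62.1   # sample mean
mu = 55        # population mean claimed in the null hypothesis
s = 13.46      # sample standard deviation
n = 30         # sample size

# Test statistic: (x_bar - mu) / s * sqrt(n)
ts = (x_bar - mu) / s * sqrt(n)
print(round(ts, 3))
```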

5. Concluding

There are two main approaches for making the conclusion of a hypothesis test:

- The critical value approach compares the test statistic with the critical value of the significance level.

- The P-value approach compares the P-value of the test statistic with the significance level.

Note: The two approaches are only different in how they present the conclusion.

The Critical Value Approach

For the critical value approach we need to find the critical value (CV) of the significance level (\(\alpha\)).

For a population mean test, the critical value (CV) is a T-value from a Student's t-distribution.

This critical T-value (CV) defines the rejection region for the test.

The rejection region is an area of probability in the tails of the Student's t-distribution.

Because the claim is that the population mean is more than 55, the rejection region is in the right tail.

The student's t-distribution is adjusted for the uncertainty from smaller samples.

This adjustment is called degrees of freedom (df), which is the sample size \((n) - 1\)

In this case the degrees of freedom (df) is: \(30 - 1 = \underline{29} \)

Choosing a significance level (\(\alpha\)) of 0.01, or 1%, we can find the critical T-value from a T-table, or with a programming language function:

With Python use the Scipy Stats library t.ppf() function to find the T-value for an \(\alpha\) = 0.01 at 29 degrees of freedom (df).

With R use the built-in qt() function to find the T-value for an \(\alpha\) = 0.01 at 29 degrees of freedom (df).

Using either method we can find that the critical T-Value is \(\approx \underline{2.462}\)
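The critical value can also be reproduced without third-party libraries. The sketch below is an illustration under that constraint: it integrates the t density numerically and inverts the result by bisection, and the helper names `t_pdf`, `t_cdf`, and `t_ppf` are made up for this example. With SciPy installed, `scipy.stats.t.ppf(1 - 0.01, 29)` returns the same quantity.

```python
from math import gamma, pi, sqrt


def t_pdf(x, df):
    """Density of Student's t distribution with df degrees of freedom."""
    coef = gamma((df + 1) / 2) / (sqrt(df * pi) * gamma(df / 2))
    return coef * (1 + x * x / df) ** (-(df + 1) / 2)


def t_cdf(x, df, steps=2000):
    """P(T <= x): Simpson's rule on [0, |x|] plus symmetry about 0."""
    a = abs(x)
    h = a / steps
    total = t_pdf(0.0, df) + t_pdf(a, df)
    for i in range(1, steps):
        total += (4 if i % 2 else 2) * t_pdf(i * h, df)
    area = total * h / 3
    return 0.5 + area if x >= 0 else 0.5 - area


def t_ppf(q, df, lo=-100.0, hi=100.0, iters=60):
    """Quantile of Student's t, found by bisection on t_cdf."""
    for _ in range(iters):
        mid = (lo + hi) / 2
        if t_cdf(mid, df) < q:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2


alpha, df = 0.01, 29
critical_value = t_ppf(1 - alpha, df)   # right-tailed test
print(round(critical_value, 3))
```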

For a right tailed test we need to check if the test statistic (TS) is bigger than the critical value (CV).

If the test statistic is bigger than the critical value, the test statistic is in the rejection region.

When the test statistic is in the rejection region, we reject the null hypothesis (\(H_{0}\)).

Here, the test statistic (TS) was \(\approx \underline{2.889}\) and the critical value was \(\approx \underline{2.462}\)


Since the test statistic was bigger than the critical value we reject the null hypothesis.

This means that the sample data supports the alternative hypothesis.

And we can summarize the conclusion stating:

The sample data supports the claim that "The average age of Nobel Prize winners when they received the prize is more than 55" at a 1% significance level.

The P-Value Approach

For the P-value approach we need to find the P-value of the test statistic (TS).

If the P-value is smaller than the significance level (\(\alpha\)), we reject the null hypothesis (\(H_{0}\)).

The test statistic was found to be \( \approx \underline{2.889} \)

For a population mean test, the test statistic is a T-value from a Student's t-distribution.

Because this is a right tailed test, we need to find the P-value of a t-value bigger than 2.889.

The student's t-distribution is adjusted according to degrees of freedom (df), which is the sample size \((30) - 1 = \underline{29}\)

We can find the P-value using a T-table, or with a programming language function:

With Python use the Scipy Stats library t.cdf() function to find the P-value of a T-value bigger than 2.889 at 29 degrees of freedom (df).

With R use the built-in pt() function to find the P-value of a T-value bigger than 2.889 at 29 degrees of freedom (df).

Using either method we can find that the P-value is \(\approx \underline{0.0036}\)

This tells us that the significance level (\(\alpha\)) would need to be bigger than 0.0036, or 0.36%, to reject the null hypothesis.

This P-value is smaller than any of the common significance levels (10%, 5%, 1%).

So the null hypothesis is rejected at all of these significance levels.

The sample data supports the claim that "The average age of Nobel Prize winners when they received the prize is more than 55" at a 10%, 5%, or 1% significance level.

Note: An outcome of an hypothesis test that rejects the null hypothesis with a p-value of 0.36% means:

For this p-value, we only expect to reject a true null hypothesis 36 out of 10000 times.

Calculating a P-Value for a Hypothesis Test with Programming

Many programming languages can calculate the P-value to decide the outcome of a hypothesis test.

Using software and programming to calculate statistics is more common for bigger sets of data, as calculating manually becomes difficult.

The P-value calculated here will tell us the lowest possible significance level where the null-hypothesis can be rejected.

With Python use the scipy and math libraries to calculate the P-value for a right tailed hypothesis test for a mean.

Here, the sample size is 30, the sample mean is 62.1, the sample standard deviation is 13.46, and the test is for a mean bigger than 55.

With R use built-in math and statistics functions to find the P-value for a right tailed hypothesis test for a mean.
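The original listings are missing here, so the following is a stand-in sketch in Python rather than the source's own code. It sticks to the standard library by integrating the t density numerically; the helper names `t_pdf` and `t_cdf` are illustrative. With SciPy available, `1 - scipy.stats.t.cdf(test_stat, n - 1)` computes the same right-tail probability.

```python
from math import gamma, pi, sqrt


def t_pdf(x, df):
    """Density of Student's t distribution with df degrees of freedom."""
    coef = gamma((df + 1) / 2) / (sqrt(df * pi) * gamma(df / 2))
    return coef * (1 + x * x / df) ** (-(df + 1) / 2)


def t_cdf(x, df, steps=2000):
    """P(T <= x): Simpson's rule on [0, |x|] plus symmetry about 0."""
    a = abs(x)
    h = a / steps
    total = t_pdf(0.0, df) + t_pdf(a, df)
    for i in range(1, steps):
        total += (4 if i % 2 else 2) * t_pdf(i * h, df)
    area = total * h / 3
    return 0.5 + area if x >= 0 else 0.5 - area


n = 30         # sample size
x_bar = 62.1   # sample mean
s = 13.46      # sample standard deviation
mu = 55        # mean claimed in the null hypothesis

test_stat = (x_bar - mu) / (s / sqrt(n))
p_value = 1 - t_cdf(test_stat, n - 1)   # right tailed test

print(f"test statistic = {test_stat:.3f}, P-value = {p_value:.4f}")
```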

Left-Tailed and Two-Tailed Tests

This was an example of a right tailed test, where the alternative hypothesis claimed that the parameter is bigger than the null hypothesis claim.

You can check out an equivalent step-by-step guide for other types here:

- Left-Tailed Test

- Two-Tailed Test

COLOR PICKER

Contact Sales

If you want to use W3Schools services as an educational institution, team or enterprise, send us an e-mail: [email protected]

Report Error

If you want to report an error, or if you want to make a suggestion, send us an e-mail: [email protected]

Top Tutorials

Top references, top examples, get certified.

- 1-800-234-2933

- [email protected]

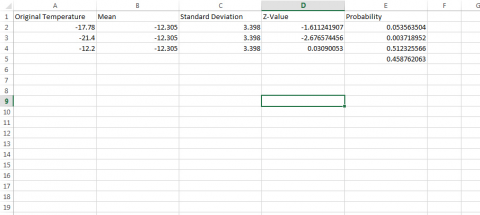

Hypothesis testing for the mean Calculator

State the null and alternative hypothesis:

Calculate our test statistic z:, determine rejection region:, you have 2 free calculationss remaining, what is the answer, how does the hypothesis testing for the mean calculator work, what 1 formula is used for the hypothesis testing for the mean calculator, what 7 concepts are covered in the hypothesis testing for the mean calculator.

- alternative hypothesis

- hypothesis testing for the mean

- null hypothesis

- test statistic

An Automated Online Math Tutor serving 8.1 million parents and students in 235 countries and territories.

Our Services

- All Courses

- Our Experts

Top Categories

- Development

- Photography

- Refer a Friend

- Festival Offer

- Limited Membership

- Scholarship

Hypothesis Testing for Means & Proportions

Lisa Sullivan, PhD

Professor of Biostatistics

Boston University School of Public Health

Introduction

This is the first of three modules that address the second area of statistical inference, hypothesis testing, in which a specific statement or hypothesis is generated about a population parameter, and sample statistics are used to assess the likelihood that the hypothesis is true. The hypothesis is based on available information and the investigator's belief about the population parameters. The process of hypothesis testing involves setting up two competing hypotheses, the null hypothesis and the alternate hypothesis. One selects a random sample (or multiple samples when there are more comparison groups), computes summary statistics and then assesses the likelihood that the sample data support the research or alternative hypothesis. Similar to estimation, the process of hypothesis testing is based on probability theory and the Central Limit Theorem.

This module will focus on hypothesis testing for means and proportions. The next two modules in this series will address analysis of variance and chi-squared tests.

Learning Objectives

After completing this module, the student will be able to:

- Define null and research hypothesis, test statistic, level of significance and decision rule

- Distinguish between Type I and Type II errors and discuss the implications of each

- Explain the difference between one and two sided tests of hypothesis

- Estimate and interpret p-values

- Explain the relationship between confidence interval estimates and p-values in drawing inferences

- Differentiate hypothesis testing procedures based on type of outcome variable and number of samples

Introduction to Hypothesis Testing

Techniques for Hypothesis Testing

The techniques for hypothesis testing depend on

- the type of outcome variable being analyzed (continuous, dichotomous, discrete)

- the number of comparison groups in the investigation

- whether the comparison groups are independent (i.e., physically separate such as men versus women) or dependent (i.e., matched or paired such as pre- and post-assessments on the same participants).

In estimation we focused explicitly on techniques for one and two samples and discussed estimation for a specific parameter (e.g., the mean or proportion of a population), for differences (e.g., difference in means, the risk difference) and ratios (e.g., the relative risk and odds ratio). Here we will focus on procedures for one and two samples when the outcome is either continuous (and we focus on means) or dichotomous (and we focus on proportions).

General Approach: A Simple Example

The Centers for Disease Control (CDC) reported on trends in weight, height and body mass index from the 1960's through 2002. 1 The general trend was that Americans were much heavier and slightly taller in 2002 as compared to 1960; both men and women gained approximately 24 pounds, on average, between 1960 and 2002. In 2002, the mean weight for men was reported at 191 pounds. Suppose that an investigator hypothesizes that weights are even higher in 2006 (i.e., that the trend continued over the subsequent 4 years). The research hypothesis is that the mean weight in men in 2006 is more than 191 pounds. The null hypothesis is that there is no change in weight, and therefore the mean weight is still 191 pounds in 2006.

In order to test the hypotheses, we select a random sample of American males in 2006 and measure their weights. Suppose we have resources available to recruit n=100 men into our sample. We weigh each participant and compute summary statistics on the sample data. Suppose in the sample we determine the following:

Do the sample data support the null or research hypothesis? The sample mean of 197.1 is numerically higher than 191. However, is this difference more than would be expected by chance? In hypothesis testing, we assume that the null hypothesis holds until proven otherwise. We therefore need to determine the likelihood of observing a sample mean of 197.1 or higher when the true population mean is 191 (i.e., if the null hypothesis is true or under the null hypothesis). We can compute this probability using the Central Limit Theorem. Specifically,

$$P(\bar{x} \geq 197.1) = P\left(Z \geq \frac{197.1 - 191}{25.6/\sqrt{100}}\right) = P(Z \geq 2.38) = 1 - 0.9913 = 0.0087$$

(Notice that we use the sample standard deviation in computing the Z score. This is generally an appropriate substitution as long as the sample size is large, n > 30.) Thus, there is less than a 1% probability of observing a sample mean as large as 197.1 when the true population mean is 191. Do you think that the null hypothesis is likely true? Based on how unlikely it is to observe a sample mean of 197.1 under the null hypothesis (i.e., <1% probability), we might infer, from our data, that the null hypothesis is probably not true.

Suppose that the sample data had turned out differently. Suppose that we instead observed the following in 2006:

n = 100, x̄ = 192.1, s = 25.6

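How likely is it to observe a sample mean of 192.1 or higher when the true population mean is 191 (i.e., if the null hypothesis is true)? We can again compute this probability using the Central Limit Theorem. Specifically,

$$P(\bar{x} \geq 192.1) = P\left(Z \geq \frac{192.1 - 191}{25.6/\sqrt{100}}\right) = P(Z \geq 0.43) = 1 - 0.6664 = 0.3336$$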

There is a 33.4% probability of observing a sample mean as large as 192.1 when the true population mean is 191. Do you think that the null hypothesis is likely true?

Neither of the sample means that we obtained allows us to know with certainty whether the null hypothesis is true or not. However, our computations suggest that, if the null hypothesis were true, the probability of observing a sample mean of 197.1 or higher is less than 1%. In contrast, if the null hypothesis were true, the probability of observing a sample mean of 192.1 or higher is about 33%. We can't know whether the null hypothesis is true, but the sample that provided a mean value of 197.1 provides much stronger evidence in favor of rejecting the null hypothesis than the sample that provided a mean value of 192.1. Note that this does not mean that a sample mean of 192.1 indicates that the null hypothesis is true; it just doesn't provide compelling evidence to reject it.
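The two tail probabilities above can be reproduced with a short Python sketch. It uses only the standard library; the function name `upper_tail_probability` is a hypothetical helper, and the sample standard deviation s = 25.6 is an assumption recovered from the Z scores of 2.38 and 0.43 quoted in the text.

```python
from math import sqrt
from statistics import NormalDist


def upper_tail_probability(sample_mean, null_mean, sd, n):
    """Return (Z, P(sample mean >= observed)) under H0 via the normal approximation."""
    standard_error = sd / sqrt(n)  # standard error of the sample mean
    z = (sample_mean - null_mean) / standard_error
    return z, 1.0 - NormalDist().cdf(z)


# s = 25.6 is assumed (implied by the Z scores reported in the text).
z_first, p_first = upper_tail_probability(197.1, 191, 25.6, 100)
z_second, p_second = upper_tail_probability(192.1, 191, 25.6, 100)

print(f"x-bar = 197.1: Z = {z_first:.2f}, P = {p_first:.4f}")
print(f"x-bar = 192.1: Z = {z_second:.2f}, P = {p_second:.4f}")
```

Because the code keeps Z unrounded, the probabilities may differ in the fourth decimal place from values read off a printed Z table, where Z is first rounded to two decimals.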

In essence, hypothesis testing is a procedure to compute a probability that reflects the strength of the evidence (based on a given sample) for rejecting the null hypothesis. In hypothesis testing, we determine a threshold or cut-off point (called the critical value) to decide when to believe the null hypothesis and when to believe the research hypothesis. It is important to note that it is possible to observe any sample mean when the null hypothesis is true (in this example, when the true population mean is 191), but some sample means are very unlikely. Based on the two samples above it would seem reasonable to believe the research hypothesis when x̄ = 197.1, but to believe the null hypothesis when x̄ = 192.1. What we need is a threshold value such that if x̄ is above that threshold then we believe that H 1 is true and if x̄ is below that threshold then we believe that H 0 is true. The difficulty in determining a threshold for x̄ is that it depends on the scale of measurement. In this example, the threshold, sometimes called the critical value, might be 195 (i.e., if the sample mean is 195 or more then we believe that H 1 is true and if the sample mean is less than 195 then we believe that H 0 is true). If we were instead interested in assessing an increase in blood pressure over time, the critical value would be different because blood pressures are measured in millimeters of mercury (mmHg) as opposed to pounds. In the following we will explain how the critical value is determined and how we handle the issue of scale.

First, to address the issue of scale in determining the critical value, we convert our sample data (in particular the sample mean) into a Z score. We know from the module on probability that the center of the Z distribution is zero and extreme values are those that exceed 2 or fall below -2. Z scores above 2 and below -2 represent approximately 5% of all Z values. If the observed sample mean is close to the mean specified in H 0 (here μ = 191), then Z will be close to zero. If the observed sample mean is much larger than the mean specified in H 0 , then Z will be large.
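As a rough illustration of why the Z score removes the issue of scale, the sketch below computes Z for the first weight sample in pounds and again after converting every quantity to kilograms. The conversion to kilograms is an illustrative assumption (the text's own contrast is with blood pressure in mmHg), and s = 25.6 is assumed as implied by the reported Z of 2.38. It also checks the share of the standard normal distribution beyond ±2.

```python
from math import sqrt
from statistics import NormalDist

LB_TO_KG = 0.45359237  # exact definition of the pound in kilograms


def z_score(sample_mean, null_mean, sd, n):
    """Standardize the sample mean against the mean specified in H0."""
    return (sample_mean - null_mean) / (sd / sqrt(n))


# Same data expressed on two measurement scales.
z_pounds = z_score(197.1, 191, 25.6, 100)
z_kg = z_score(197.1 * LB_TO_KG, 191 * LB_TO_KG, 25.6 * LB_TO_KG, 100)

# Proportion of Z values above 2 or below -2.
tail_mass = 2 * (1 - NormalDist().cdf(2))

print(f"Z in pounds: {z_pounds:.4f}, Z in kilograms: {z_kg:.4f}")
print(f"P(|Z| > 2) = {tail_mass:.4f}")
```

The two Z scores agree up to floating-point rounding, so a single critical value on the Z scale can serve any unit of measurement.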

In hypothesis testing, we select a critical value from the Z distribution. This is done by first determining what is called the level of significance, denoted α ("alpha"). What we are doing here is drawing a line at extreme values. The level of significance is the probability that we reject the null hypothesis (in favor of the alternative) when it is actually true and is also called the Type I error rate.

α = Level of significance = P(Type I error) = P(Reject H 0 | H 0 is true).

Because α is a probability, it ranges between 0 and 1. The most commonly used value in the medical literature for α is 0.05, or 5%. Thus, if an investigator selects α=0.05, then they are allowing a 5% probability of incorrectly rejecting the null hypothesis in favor of the alternative when the null is in fact true. Depending on the circumstances, one might choose to use a level of significance of 1% or 10%. For example, if an investigator wanted to reject the null only if there were even stronger evidence than that ensured with α=0.05, they could choose α=0.01 as their level of significance. The typical values for α are 0.01, 0.05 and 0.10, with α=0.05 the most commonly used value.
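The meaning of α as a Type I error rate can be checked by simulation. The sketch below is a minimal illustration under stated assumptions: it repeatedly draws normally distributed samples for which H 0 really is true (mean 191), applies an upper-tailed Z test with a known standard deviation, and counts how often H 0 is rejected. The function name, the sample size of 50, the number of repetitions and the seed are all hypothetical choices, not part of the original example.

```python
import random
from math import sqrt
from statistics import NormalDist, fmean


def simulate_type_i_error(alpha=0.05, null_mean=191.0, sigma=25.6,
                          n=50, repetitions=4000, seed=1):
    """Estimate P(reject H0 | H0 is true) for an upper-tailed Z test."""
    rng = random.Random(seed)
    critical_value = NormalDist().inv_cdf(1 - alpha)
    standard_error = sigma / sqrt(n)
    rejections = 0
    for _ in range(repetitions):
        # Draw a sample from a population where the null hypothesis holds.
        sample = [rng.gauss(null_mean, sigma) for _ in range(n)]
        z = (fmean(sample) - null_mean) / standard_error
        if z > critical_value:
            rejections += 1
    return rejections / repetitions


rate = simulate_type_i_error()
print(f"Estimated Type I error rate: {rate:.3f}")
```

The estimated rate should land close to the chosen α, with some simulation noise.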

Suppose in our weight study we select α=0.05. We need to determine the value of Z that holds 5% of the values above it (see below).

The critical value of Z for α =0.05 is Z = 1.645 (i.e., 5% of the distribution is above Z=1.645). With this value we can set up what is called our decision rule for the test. The rule is to reject H 0 if the Z score exceeds 1.645.
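Instead of reading the critical value from a table, it can be obtained from the inverse of the standard normal cumulative distribution function. The following sketch uses Python's standard library; `upper_tail_critical_value` is a hypothetical helper name.

```python
from statistics import NormalDist


def upper_tail_critical_value(alpha):
    """Z value that leaves a proportion alpha of the distribution above it."""
    return NormalDist().inv_cdf(1 - alpha)


# The three levels of significance named in the text.
for alpha in (0.10, 0.05, 0.01):
    print(f"alpha = {alpha:.2f}: critical Z = {upper_tail_critical_value(alpha):.3f}")
```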

With the first sample we have

$$Z = \frac{\bar{x} - \mu_0}{s/\sqrt{n}} = \frac{197.1 - 191}{25.6/\sqrt{100}} = 2.38$$

Because 2.38 > 1.645, we reject the null hypothesis. (The same conclusion can be drawn by comparing the 0.0087 probability of observing a sample mean as extreme as 197.1 to the level of significance of 0.05. If the observed probability is smaller than the level of significance we reject H 0 .) Because the Z score exceeds the critical value, we conclude that the mean weight for men in 2006 is more than 191 pounds, the value reported in 2002. If we observed the second sample (i.e., sample mean =192.1), we would not be able to reject the null hypothesis because the Z score is 0.43, which is not in the rejection region (i.e., the region in the tail end of the curve above 1.645). With the second sample we do not have sufficient evidence (because we set our level of significance at 5%) to conclude that weights have increased. Again, the same conclusion can be reached by comparing probabilities. The probability of observing a sample mean as extreme as 192.1 is 33.4%, which is not below our 5% level of significance.

Hypothesis Testing: Upper-, Lower-, and Two-Tailed Tests

The procedure for hypothesis testing is based on the ideas described above. Specifically, we set up competing hypotheses, select a random sample from the population of interest and compute summary statistics. We then determine whether the sample data support the null or alternative hypothesis. The procedure can be broken down into the following five steps.

- Step 1. Set up hypotheses and select the level of significance α.

H 0 : Null hypothesis (no change, no difference);

H 1 : Research hypothesis (investigator's belief); α =0.05

- Step 2. Select the appropriate test statistic.

The test statistic is a single number that summarizes the sample information. An example of a test statistic is the Z statistic computed as follows:

$$Z = \frac{\bar{x} - \mu_0}{s/\sqrt{n}}$$

When the sample size is small, we will use t statistics (just as we did when constructing confidence intervals for small samples). As we present each scenario, alternative test statistics are provided along with conditions for their appropriate use.

- Step 3. Set up decision rule.

The decision rule is a statement that tells under what circumstances to reject the null hypothesis. The decision rule is based on specific values of the test statistic (e.g., reject H 0 if Z > 1.645). The decision rule for a specific test depends on 3 factors: the research or alternative hypothesis, the test statistic and the level of significance. Each is discussed below.

- The decision rule depends on whether an upper-tailed, lower-tailed, or two-tailed test is proposed. In an upper-tailed test the decision rule has investigators reject H 0 if the test statistic is larger than the critical value. In a lower-tailed test the decision rule has investigators reject H 0 if the test statistic is smaller than the critical value. In a two-tailed test the decision rule has investigators reject H 0 if the test statistic is extreme, either larger than an upper critical value or smaller than a lower critical value.

- The exact form of the test statistic is also important in determining the decision rule. If the test statistic follows the standard normal distribution (Z), then the decision rule will be based on the standard normal distribution. If the test statistic follows the t distribution, then the decision rule will be based on the t distribution. The appropriate critical value will be selected from the t distribution again depending on the specific alternative hypothesis and the level of significance.

- The third factor is the level of significance. The level of significance which is selected in Step 1 (e.g., α =0.05) dictates the critical value. For example, in an upper tailed Z test, if α =0.05 then the critical value is Z=1.645.

The following figures illustrate the rejection regions defined by the decision rule for upper-, lower- and two-tailed Z tests with α=0.05. Notice that the rejection regions are in the upper, lower and both tails of the curves, respectively. The decision rules are written below each figure.

Rejection Region for Upper-Tailed Z Test (H 1 : μ > μ 0 ) with α =0.05

The decision rule is: Reject H 0 if Z > 1.645.

Rejection Region for Lower-Tailed Z Test (H 1 : μ < μ 0 ) with α =0.05

The decision rule is: Reject H 0 if Z < -1.645.

Rejection Region for Two-Tailed Z Test (H 1 : μ ≠ μ 0 ) with α =0.05

The decision rule is: Reject H 0 if Z < -1.960 or if Z > 1.960.

The complete table of critical values of Z for upper, lower and two-tailed tests can be found in the table of Z values to the right in "Other Resources."

Critical values of t for upper, lower and two-tailed tests can be found in the table of t values in "Other Resources."
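The three decision rules for Z tests can be sketched in a single function. This is an illustrative helper only (the name `rejects_null` and the `tail` labels are assumptions), and it covers the standard normal case; the t distribution is not included because it is not available in Python's standard library.

```python
from statistics import NormalDist


def rejects_null(z, alpha=0.05, tail="upper"):
    """Apply the Z-test decision rule for the given tail and significance level."""
    std_normal = NormalDist()
    if tail == "upper":
        # Reject H0 if Z is larger than the upper critical value.
        return z > std_normal.inv_cdf(1 - alpha)
    if tail == "lower":
        # Reject H0 if Z is smaller than the (negative) lower critical value.
        return z < std_normal.inv_cdf(alpha)
    if tail == "two-sided":
        # Reject H0 if Z is extreme in either direction; alpha is split across tails.
        return abs(z) > std_normal.inv_cdf(1 - alpha / 2)
    raise ValueError("tail must be 'upper', 'lower' or 'two-sided'")


print(rejects_null(2.38, tail="upper"))       # first weight sample
print(rejects_null(0.43, tail="upper"))       # second weight sample
print(rejects_null(-1.70, tail="lower"))
print(rejects_null(1.70, tail="two-sided"))
```

Note that a Z of 1.70 would lead to rejection in an upper-tailed test but not in a two-tailed test at the same α, because the two-tailed rule places only α/2 in each tail.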

- Step 4. Compute the test statistic.

Here we compute the test statistic by substituting the observed sample data into the test statistic identified in Step 2.

- Step 5. Conclusion.

The final conclusion is made by comparing the test statistic (which is a summary of the information observed in the sample) to the decision rule. The final conclusion will be either to reject the null hypothesis (because the sample data are very unlikely if the null hypothesis is true) or not to reject the null hypothesis (because the sample data are not very unlikely).

If the null hypothesis is rejected, then an exact significance level is computed to describe the likelihood of observing the sample data assuming that the null hypothesis is true. The exact level of significance is called the p-value and it will be less than the chosen level of significance if we reject H 0 .

Statistical computing packages provide exact p-values as part of their standard output for hypothesis tests. In fact, when using a statistical computing package, the steps outlined above can be abbreviated. The hypotheses (step 1) should always be set up in advance of any analysis and the significance criterion should also be determined (e.g., α =0.05). Statistical computing packages will produce the test statistic (usually reporting the test statistic as t) and a p-value. The investigator can then determine statistical significance using the following: If p < α then reject H 0 .
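To make the abbreviated workflow concrete, here is a minimal sketch of what such a package does for a one-sample Z test: it computes the test statistic, converts it to a p-value for the chosen tail, and applies the rule "reject H 0 if p < α". The names `ZTestResult` and `one_sample_z_test` are hypothetical, the normal distribution is used in place of t (a large-sample assumption), and s = 25.6 is assumed as implied by the Z scores in the weight example.

```python
from dataclasses import dataclass
from math import sqrt
from statistics import NormalDist


@dataclass
class ZTestResult:
    z: float
    p_value: float
    reject_null: bool


def one_sample_z_test(sample_mean, null_mean, sd, n, alpha=0.05, tail="upper"):
    """Large-sample Z test for one mean, decided with the p-value approach."""
    z = (sample_mean - null_mean) / (sd / sqrt(n))
    cdf = NormalDist().cdf(z)
    if tail == "upper":
        p_value = 1 - cdf                  # area above the observed Z
    elif tail == "lower":
        p_value = cdf                      # area below the observed Z
    elif tail == "two-sided":
        p_value = 2 * min(cdf, 1 - cdf)    # both tails, equally extreme
    else:
        raise ValueError("tail must be 'upper', 'lower' or 'two-sided'")
    return ZTestResult(z=z, p_value=p_value, reject_null=p_value < alpha)


first = one_sample_z_test(197.1, 191, 25.6, 100)
second = one_sample_z_test(192.1, 191, 25.6, 100)
print(first)
print(second)
```

For a given tail and α, comparing the p-value with α gives the same decision as comparing Z with the critical value, so either approach can be used.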

- Step 1. Set up hypotheses and determine level of significance

H 0 : μ = 191 H 1 : μ > 191 α =0.05

The research hypothesis is that weights have increased, and therefore an upper tailed test is used.

- Step 2. Select the appropriate test statistic.

Because the sample size is large (n > 30) the appropriate test statistic is

$$Z = \frac{\bar{x} - \mu_0}{s/\sqrt{n}}$$

- Step 3. Set up decision rule.

In this example, we are performing an upper tailed test (H 1 : μ> 191), with a Z test statistic and selected α =0.05. Reject H 0 if Z > 1.645.

- Step 4. Compute the test statistic.

We now substitute the sample data into the formula for the test statistic identified in Step 2.